The speed of trust: How slow pages hurt your brand

Performance isn’t just a technical metric or a revenue lever. It’s a perception engine. Every delay subtly reshapes how users evaluate your credibility, professionalism, and trustworthiness. Once perception shifts, it’s difficult to reverse.

We’ve known for years that slow pages hurt conversion rates. They increase bounce. They reduce revenue. They drag down engagement.

But there’s another cost that’s harder to measure and potentially more damaging in the long term: Slow pages change how people feel about your brand.

How fast is fast enough? Rethinking web performance in pragmagical terms

In a world full of friction – slow lines, dropped calls, canceled flights – creating moments of digital flow can feel like a gift. "Fast enough" isn’t just about protecting conversion rates. It’s about creating experiences that preserve momentum, reduce friction, and feel almost invisible.

When it comes to web performance, how fast is fast enough?

It’s a question I’ve tried to answer in different ways over the years. But while preparing this talk for performance.now(), I decided to go back to first principles. I tried to look at the question with fresh eyes – to set aside the patterns I’ve accumulated after years of working in the performance space and ask myself: what does "fast" really mean?

The honest answer? It depends.

There are so many variables. Any attempt to reduce "fast enough" to a single number – 1.2 seconds, 2.5 seconds, 100 milliseconds – quickly falls apart. If you came here hoping for a universal threshold, I’m sorry to disappoint you.

Instead, I want to explore something more nuanced: what fast feels like and how we might bridge the gap between business pragmatism and user delight.

Because somewhere between those two lies what I like to call the "pragmagical".

NEW! A better way to quantify user happiness on your site

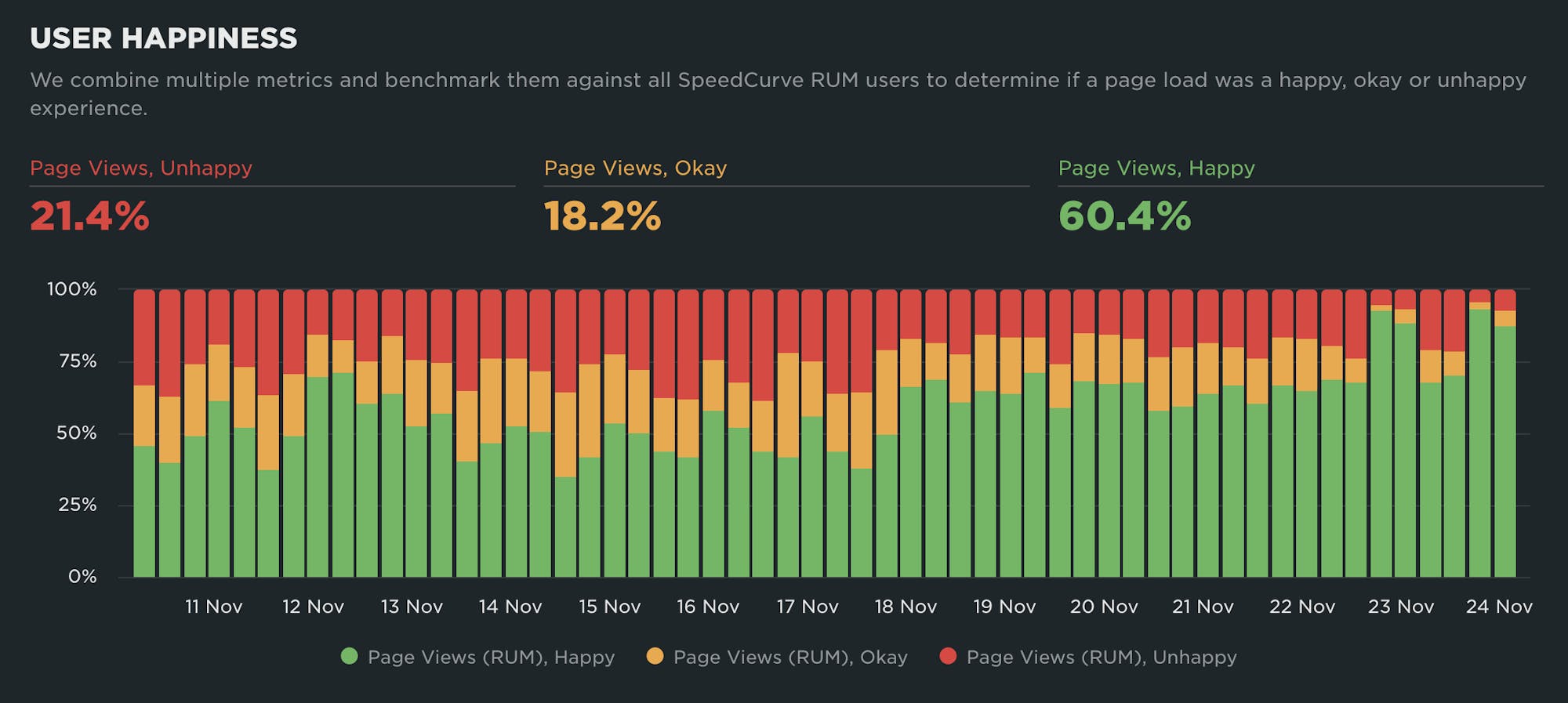

The goal of making websites faster is to make users happier. Since user happiness can't be measured directly, we launched the User Happiness metric more than six years ago. We've just released a fresh User Happiness update to reflect changes in web browsers and the overall web performance space!

Back in October 2019, we released our User Happiness metric with the goal of quantifying how a user might have felt while loading a page.

User Happiness is an aggregated metric that combines important user experience signals, gathered with real user monitoring (RUM). To create the User Happiness algorithm, we picked metrics and thresholds that we felt reflected the overall user experience: pages that loaded slowly or lagged during interaction were more likely to make someone feel unhappy, whereas fast and snappy pages would keep users happy.

More than six years later, we've updated User Happiness to reflect changes in web browsers and the overall web performance space. Keep reading to learn more.
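
To make the idea of "metrics and thresholds" concrete, here is a minimal sketch of a threshold-based classifier. It is not the User Happiness algorithm. The thresholds are Google's published Core Web Vitals boundaries for LCP and INP, used as stand-ins, and the names `classifyPageView` and `happyShare` are hypothetical.

```typescript
// Illustrative only: these are Google's published Core Web Vitals thresholds
// (in milliseconds), standing in for whatever thresholds User Happiness uses.
type MetricName = "lcp" | "inp";
type Mood = "happy" | "ok" | "unhappy";
type PageView = Partial<Record<MetricName, number>>;

const thresholds: Record<MetricName, { good: number; poor: number }> = {
  lcp: { good: 2500, poor: 4000 },
  inp: { good: 200, poor: 500 },
};
const metricNames: MetricName[] = ["lcp", "inp"];

// One poor signal makes the page view unhappy; any middling signal makes it ok.
function classifyPageView(view: PageView): Mood {
  let mood: Mood = "happy";
  for (const metric of metricNames) {
    const value = view[metric];
    if (value === undefined) continue; // metric was not reported for this view
    if (value > thresholds[metric].poor) return "unhappy";
    if (value > thresholds[metric].good) mood = "ok";
  }
  return mood;
}

// Share of page views classified as happy, between 0 and 1.
function happyShare(views: PageView[]): number {
  if (views.length === 0) return 0;
  const happy = views.filter((v) => classifyPageView(v) === "happy").length;
  return happy / views.length;
}

const sampleViews: PageView[] = [
  { lcp: 1800, inp: 120 }, // happy
  { lcp: 3000, inp: 120 }, // ok: LCP sits between good and poor
  { lcp: 1800, inp: 650 }, // unhappy: INP is beyond poor
  { lcp: 2100 },           // happy: only LCP was reported
];
console.log(happyShare(sampleViews));
```

Letting a single poor signal decide the outcome is one possible design choice. A weighted score across metrics would be another.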

2025 Holiday Readiness Checklist (Page Speed Edition!)

Delivering a great user experience through the holiday season is a marathon, not a sprint. Here are 25 things you can do to make sure your site is fast and available every day, not just Black Friday.

Your design and development teams are working hard to attract users and turn browsers into buyers, with strategies like:

- High-resolution images and videos

- Geo-targeted campaigns and content

- Third-party tags for audience analytics and retargeting

However, all those strategies can take a toll on the speed and user experience of your pages – and each carries the risk of introducing single points of failure (SPoFs).

Below we've curated 25 things you can do to keep your users happy throughout the holidays (and beyond). If you're scrambling to optimize your site before Black Friday, you still have time to implement some or all of these best practices. And if you're already close to being ready for your holiday code freeze, you can use this as a checklist to validate that you've ticked all the boxes on your performance to-do list.

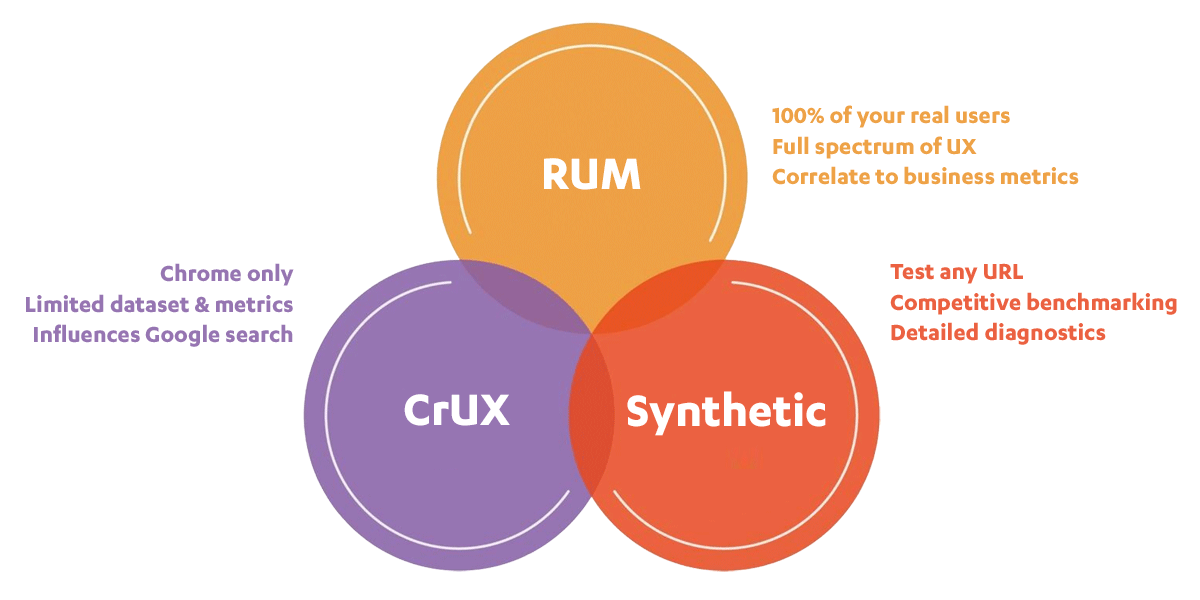

When to use CrUX, RUM, and Synthetic web performance monitoring

"Should I use synthetic monitoring, real user monitoring, or CrUX?" We hear this question a lot. It's important to know the strengths and limitations of each monitoring tool and what they’re best used for, so we don’t miss out on valuable insights.

This post includes:

- How synthetic and real user monitoring (RUM) work

- What is CrUX?

- Is CrUX a substitute for RUM?

- When and why to use each tool

- An obscure cheese metaphor

- Plus a quick survey question at the end!

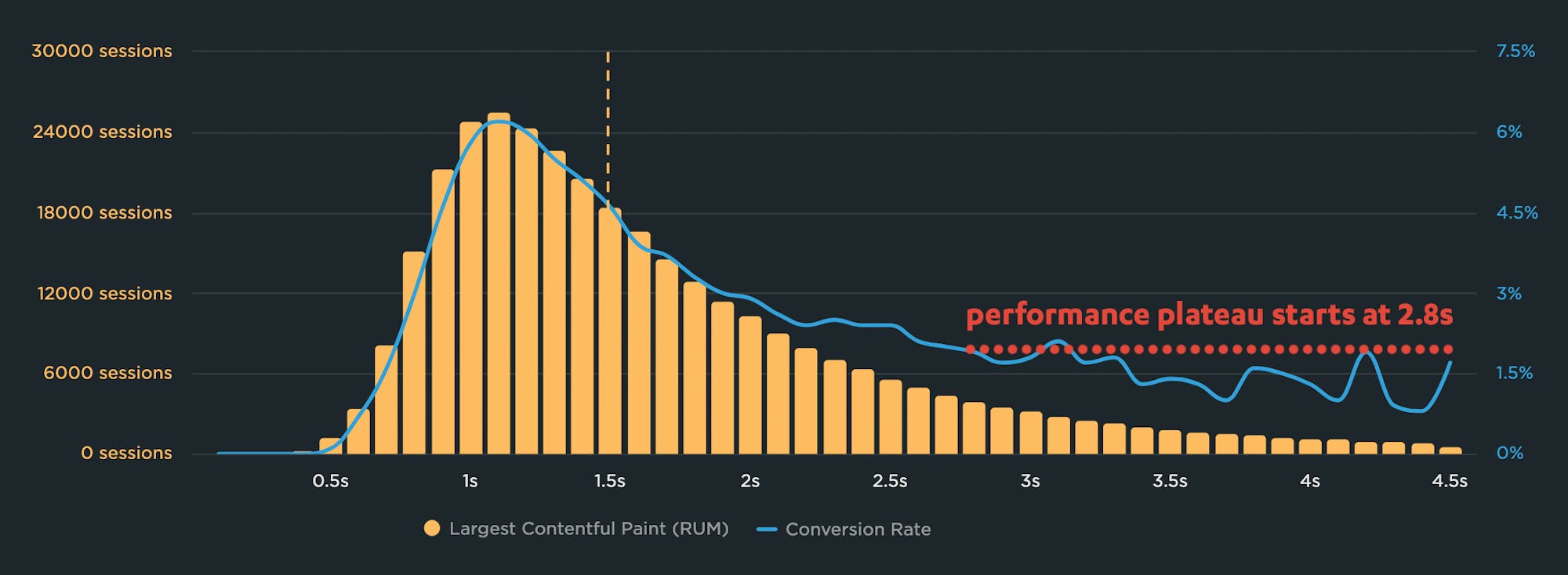

Why you need to know your site's performance plateau (and how to find it)

Have you ever wondered why your site got faster, but your business and user engagement metrics didn't improve? The answer might lie on the performance plateau.

Have you ever asked yourself these questions?

"I made my pages faster, but my business and user engagement metrics didn't change. WHY???"

"How do I know how fast my site should be?"

"How can I demonstrate the business value of page speed to people in my organization?"

The answers might lie with identifying and understanding the performance plateau for your site.

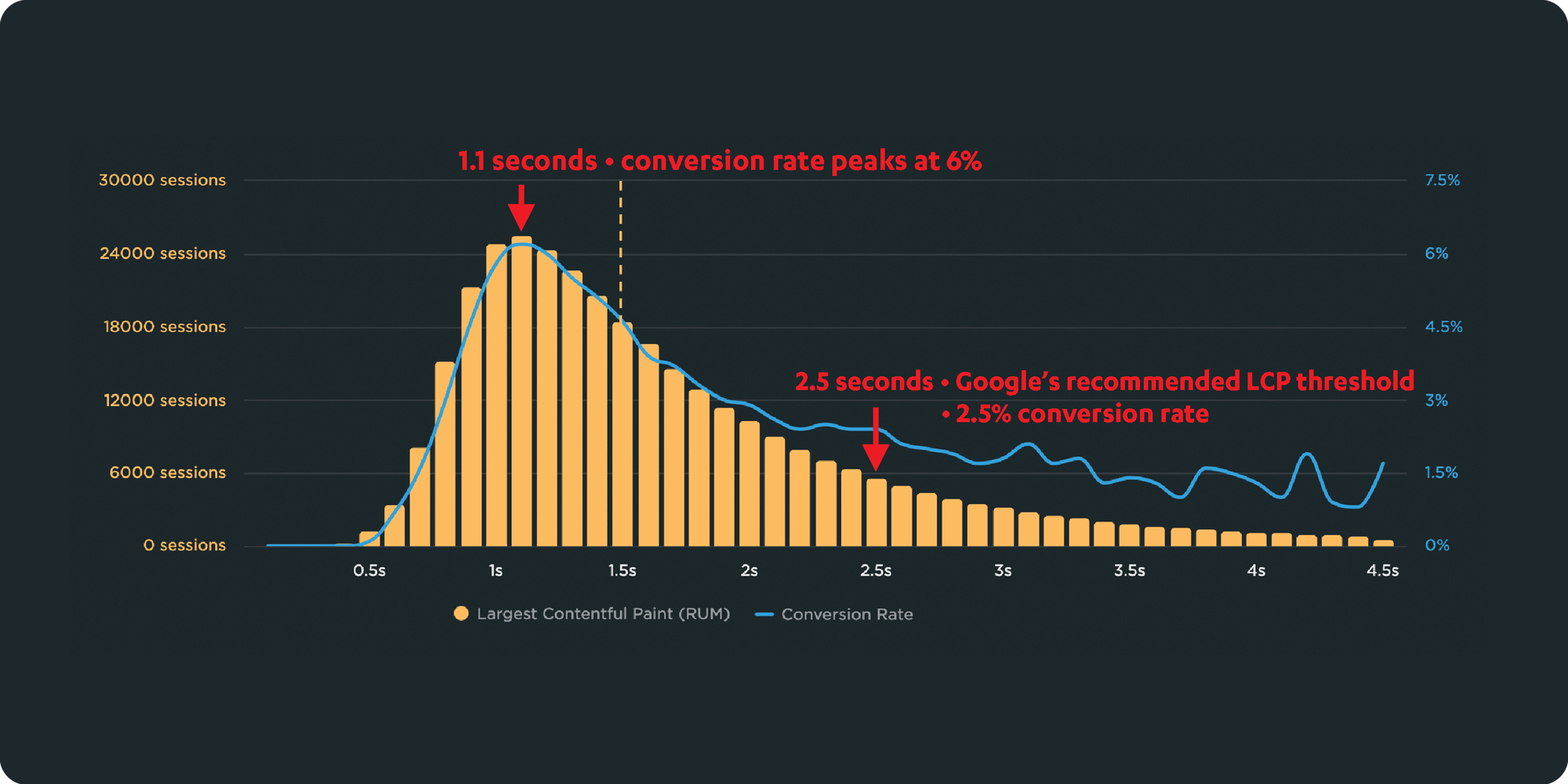

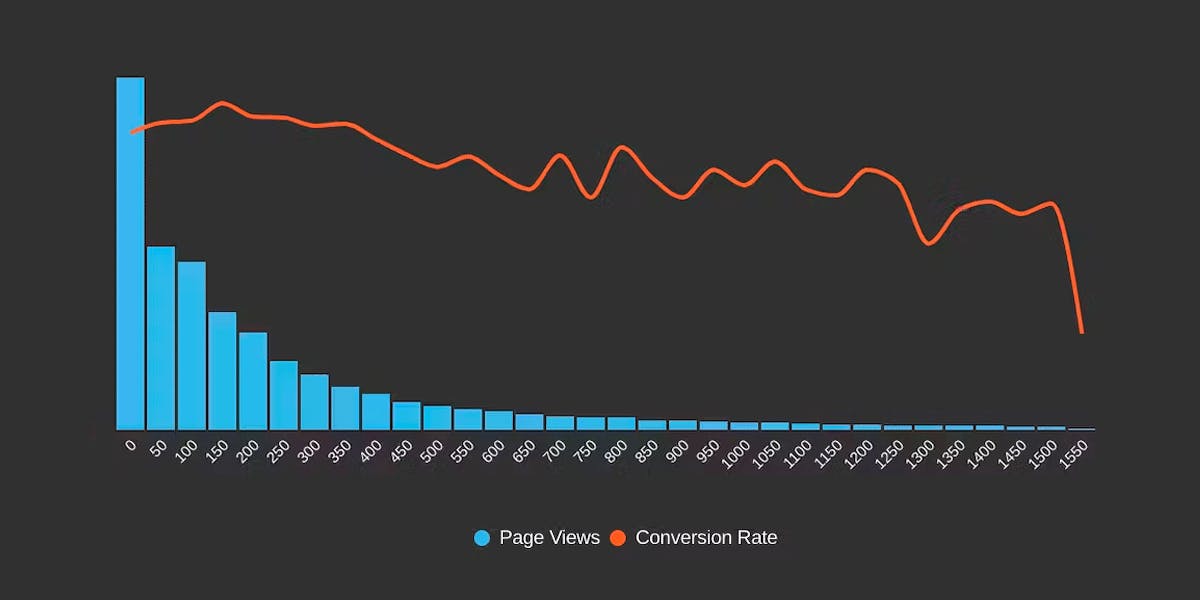

Correlation charts: Connect the dots between site speed and business success

If you could measure the impact of site speed on your business, how valuable would that be for you? Say hello to correlation charts – your new best friend.

Here's the truth: The business folks in your organization probably don't care about page speed metrics. But that doesn't mean they don't care about page speed. It just means you need to talk with them using metrics they already care about – such as conversion rate, revenue, and bounce rate.

That's why correlation charts are your new best friend.
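
As a rough illustration of what sits behind a chart like this, the sketch below groups page views into load-time buckets and computes the conversion rate for each bucket. The data shape (`TrackedView`), the one-second bucket size, and the sample values are all assumptions made for the example, not a description of how any particular tool builds its charts.

```typescript
// Hypothetical shape for one RUM page view.
interface TrackedView {
  loadTimeMs: number;
  converted: boolean;
}

interface ChartPoint {
  fromMs: number;         // lower bound of the load-time bucket
  views: number;          // page views that fell into the bucket
  conversionRate: number; // conversions divided by views, between 0 and 1
}

// Group views into fixed-width load-time buckets, then compute the rate per bucket.
function correlationChart(views: TrackedView[], bucketMs: number = 1000): ChartPoint[] {
  const buckets: Record<number, { views: number; conversions: number }> = {};
  for (const view of views) {
    const fromMs = Math.floor(view.loadTimeMs / bucketMs) * bucketMs;
    const bucket = buckets[fromMs] || { views: 0, conversions: 0 };
    bucket.views += 1;
    if (view.converted) bucket.conversions += 1;
    buckets[fromMs] = bucket;
  }
  return Object.keys(buckets)
    .map(Number)
    .sort((a, b) => a - b)
    .map((fromMs) => ({
      fromMs,
      views: buckets[fromMs].views,
      conversionRate: buckets[fromMs].conversions / buckets[fromMs].views,
    }));
}

// Made-up sample data in which faster page views convert more often.
const trackedViews: TrackedView[] = [
  { loadTimeMs: 500, converted: true },
  { loadTimeMs: 700, converted: true },
  { loadTimeMs: 800, converted: true },
  { loadTimeMs: 900, converted: false },
  { loadTimeMs: 1400, converted: true },
  { loadTimeMs: 1800, converted: false },
  { loadTimeMs: 2100, converted: true },
  { loadTimeMs: 2300, converted: false },
  { loadTimeMs: 2600, converted: false },
  { loadTimeMs: 2900, converted: false },
];
const chart = correlationChart(trackedViews);
console.log(chart);
```

Plotting `conversionRate` against `fromMs` gives the line that business stakeholders can read without knowing what any page speed metric means.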

Downtime vs slowtime: Which costs you more?

Comparing site outages to page slowdowns is like comparing a tire blowout to a slow leak. One is big and dramatic. The other is quiet and insidious. Either way, you end up stranded on the side of the road.

Downtime is horrifying for any company that uses the web as a vital part of its business (which is to say, most companies). Some of you may remember the Amazon outage of 2013, when the retail behemoth went down for 40 minutes. The incident made headlines, largely because those 40 minutes were estimated to have cost the company $5 million in lost sales.

Downtime makes headlines:

- 2015 – 12-hour Apple outage cost the company $25 million

- 2016 – 5-hour outage caused an estimated loss of $150 million for Delta Airlines

- 2019 – 14-hour outage cost Facebook an estimated $90 million

It's easy to see why these stories capture our attention. These are big numbers! No company wants to think about losing millions in revenue due to an outage.

Page slowdowns can cause as much damage as downtime

While Amazon and other big players take pains to avoid outages, these companies also go to great effort to manage the day-to-day performance – in terms of page speed and user experience – of their sites. That’s because these companies know that page slowdowns can cause at least as much damage as downtime.

Six things that slow down your site's UX (and why you have no control over them)

Have you ever looked at the page speed metrics – such as Start Render and Largest Contentful Paint – for your site in both your synthetic and real user monitoring tools and wondered "Why are these numbers so different?"


Part of the answer is this: You have a lot of control over the design and code for the pages on your site, plus a decent amount of control over the first and middle mile of the network your pages travel over. But when it comes to the last mile – or more specifically, the last few feet – matters are no longer in your hands.

Your synthetic testing tool shows you how your pages perform in a clean lab environment, using variables – such as browser, connection type, even CPU power – that you've selected.

Your real user monitoring (RUM) tool shows you how your pages perform out in the real world, where they're affected by a myriad of variables that are completely outside your control.

In this post we'll review a handful of those performance-leaching culprits that are outside your control – and that can add precious seconds to the amount of time it takes for your pages to render for your users. Then we'll talk about how to use your monitoring tools to understand how real people experience your site.

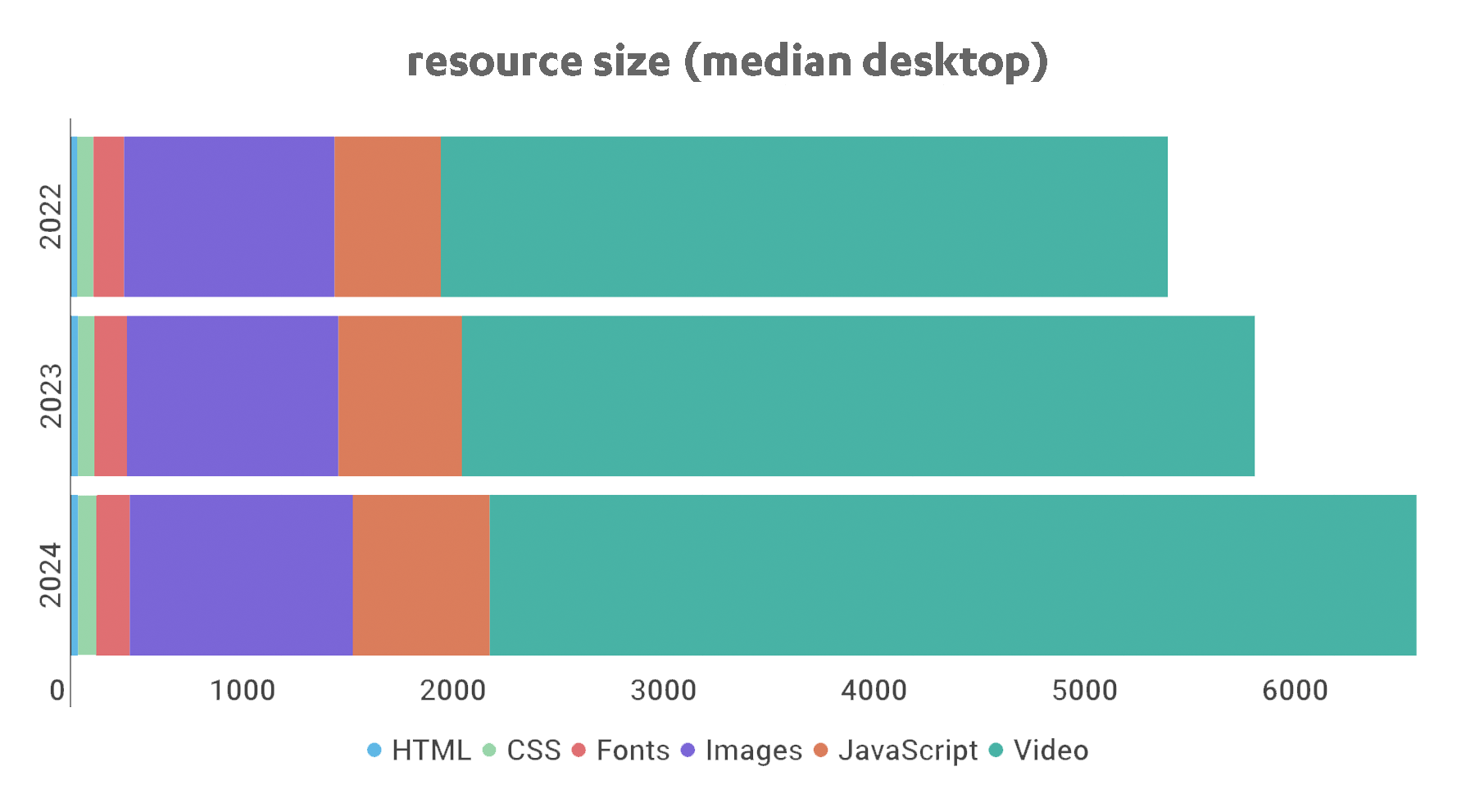

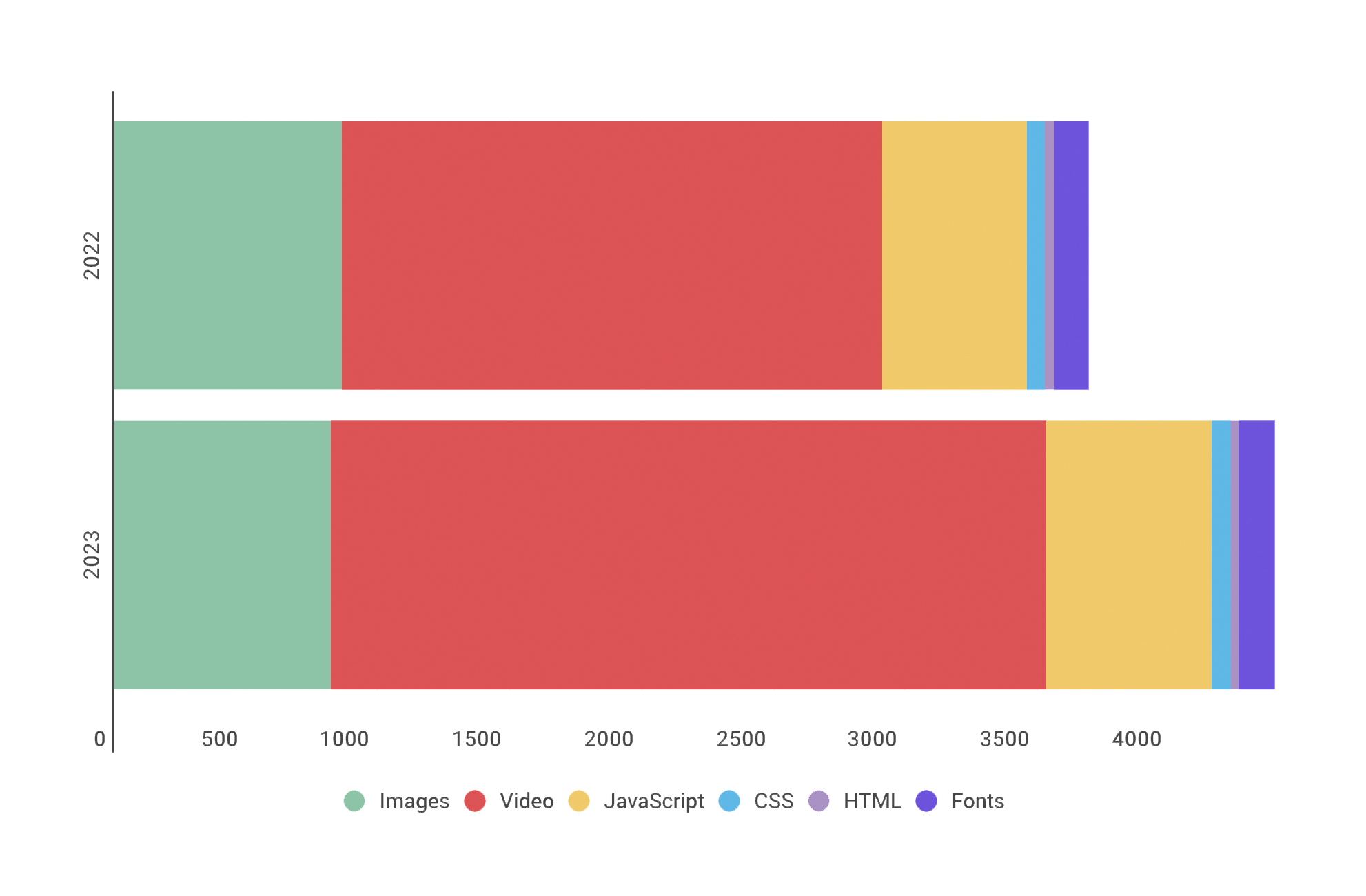

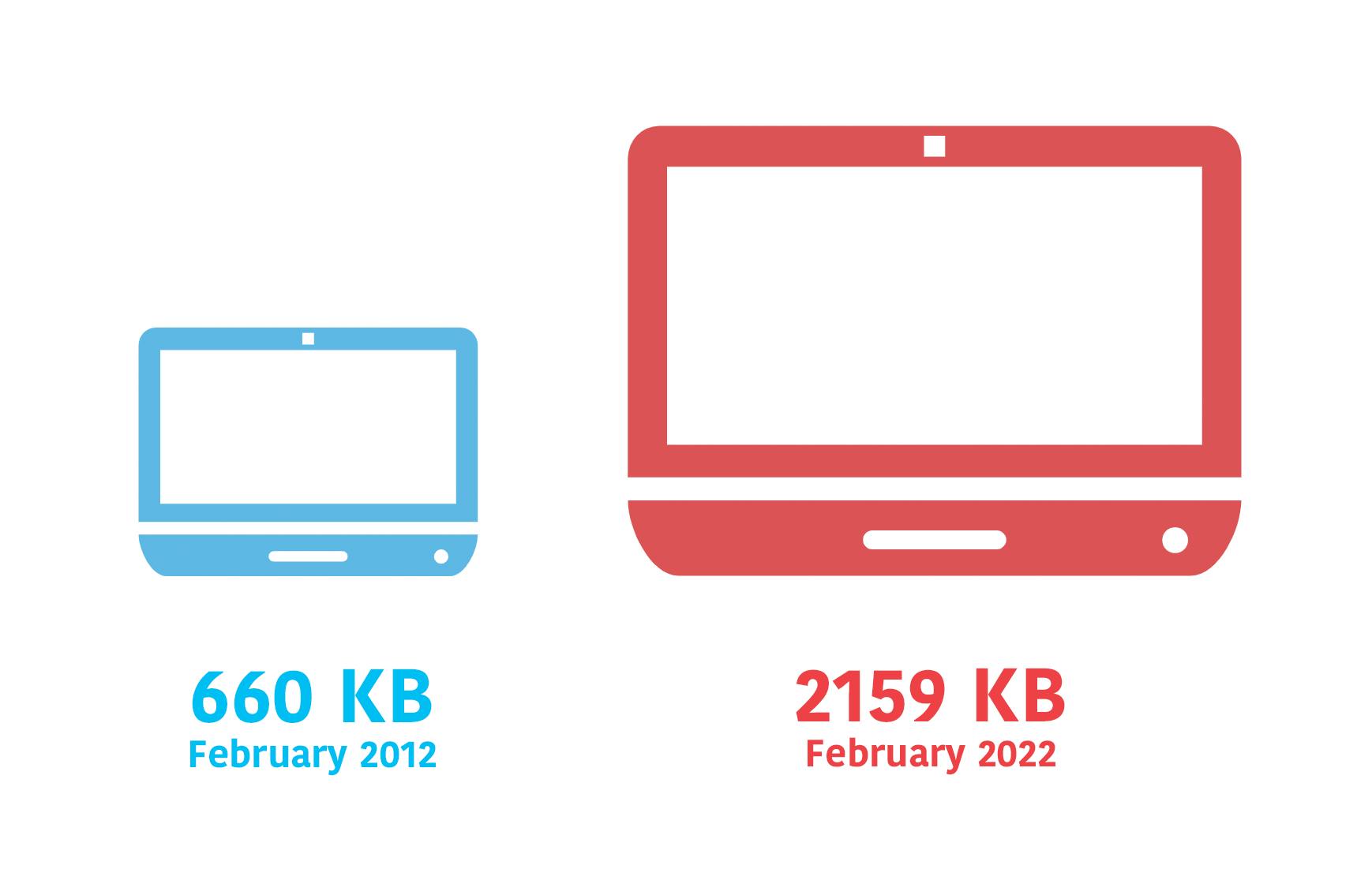

Page bloat update: How does ever-increasing page size affect your business and your users?

The median web page is 8% bigger than it was just one year ago. How does this affect your page speed, your Core Web Vitals, your search rank, your business, and most important – your users? Keep scrolling for the latest trends and analysis.

For almost fifteen years, I've been writing about page bloat, its impact on site speed, and ultimately how it affects your users and your business. You might think this topic would be exhausted by now, but every year I learn new things – beyond the overarching fact that pages keep getting bigger and more complex, as you can see in this chart, using data from the HTTP Archive:

In this post, we'll cover:

- How much pages have grown over the past year

- How page bloat hurts your business and – at the heart of everything – your users

- How page bloat affects Google's Core Web Vitals (and therefore SEO)

- If it's possible to have large pages that still deliver a good user experience

- Page size targets

- How to track page size and complexity

- How to fight regressions

Hello INP! Here's everything you need to know about the newest Core Web Vital

After years of development and testing, Google has added Interaction to Next Paint (INP) to its trifecta of Core Web Vitals – the performance metrics that are a key ingredient in its search ranking algorithm. INP replaces First Input Delay (FID) as the Vitals responsiveness metric.

Not sure what INP means or why it matters? No worries – that's what this post is for. :)

- What is INP?

- Why has it replaced First Input Delay?

- How does INP correlate with user behaviour metrics, such as conversion rate?

- What you need to know about INP on mobile devices

- How to debug and optimize INP

And at the bottom of this post, we'll wrap things up with some inspiring case studies from companies that have found that improving INP has improved sales, pageviews, and bounce rate.

Let's dive in!

The psychology of site speed and human happiness

In the fourteen years that I've been working in the web performance industry, I've done a LOT of research, writing, and speaking about the psychology of page speed – in other words, why we crave fast, seamless online experiences. In fact, the entire first chapter of my book, Time Is Money (reprinted here courtesy of the good folks at O'Reilly), is dedicated to the subject.

I recently shared some of my favourite research at Beyond Tellerrand (video here) and thought it would be fun to round it up in a post. Here we're going to cover:

- Why time is a crucial (and often neglected) usability factor

- How we perceive wait times

- Why our memory is unreliable

- How the end of an experience has a disproportionate effect on our perception

- How fast we expect pages to be (and why)

- "Flow" and what it means in terms of how we use the web

- How delays hurt our productivity

- What we can learn from measuring "web stress"

- How slowness affects our entire perception of a brand

There's a lot of fascinating material to cover, so let's get started!

Mobile INP performance: The elephant in the room

Earlier this year, when Google announced that Interaction to Next Paint (INP) will replace First Input Delay (FID) as the responsiveness metric in Core Web Vitals in *gulp* March of 2024, we had a lot to say about it. (TLDR: FID doesn't correlate with real user behavior, so we don't endorse it as a meaningful metric.)

Our stance hasn't changed much since then. For the most part, everyone agrees the transition from FID to INP is a good thing. INP certainly seems to be capturing interaction issues that we see in the field.

However, after several months of discussing the impending change and getting a better look at INP issues in the wild, it's hard to ignore the fact that mobile stands out as the biggest INP offender by a wide margin. This doesn't get talked about as much as it should, so in this post we'll explore:

- The gap between "good" INP for desktop vs mobile

- Working theories as to why mobile INP is so much poorer than desktop INP

- Correlating INP with user behavior and business metrics (like conversion rate)

- How you can track and improve INP for your pages

Does Interaction to Next Paint actually correlate to user behavior?

Earlier this year, Google announced that Interaction to Next Paint (INP) is no longer an experimental metric. INP will replace First Input Delay (FID) as a Core Web Vital in March of 2024.

Now that INP has arrived to dethrone FID as the responsiveness metric in Core Web Vitals, we've turned our eye to scrutinizing its effectiveness. In this post, we'll look at real-world data and attempt to answer: What correlation – if any – does INP have with actual user behavior and business metrics?

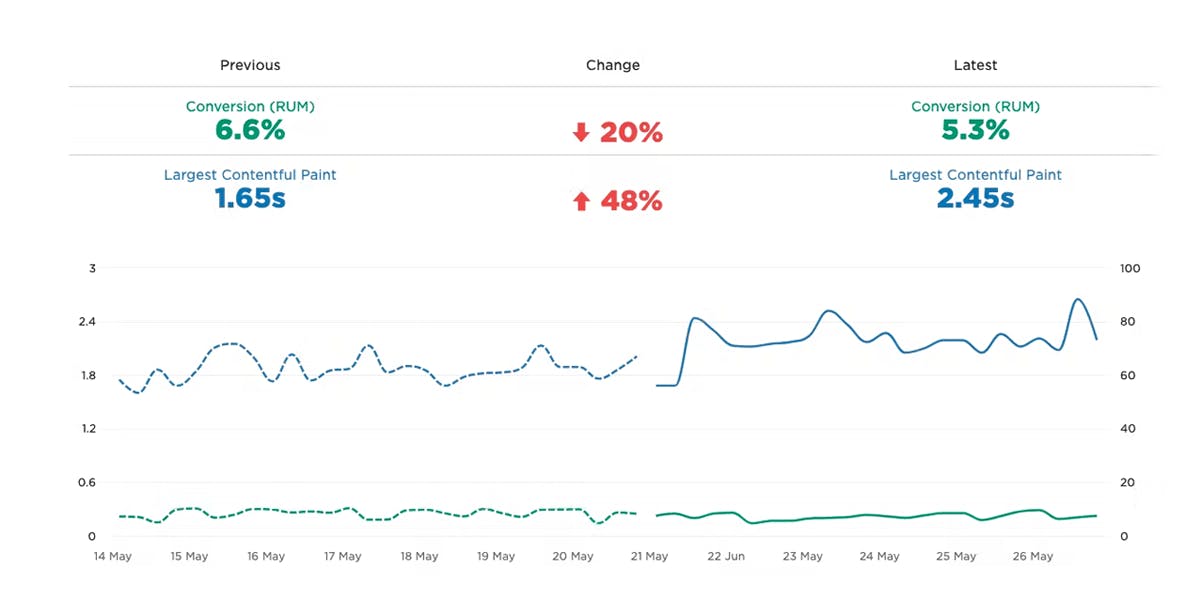

Exploring performance and conversion rates just got easier

Demonstrating the impact of performance on your users – and on your business – is one of the best ways to get your company to care about the speed of your site.

Tracking goal-based metrics like conversion rate alongside performance data can give you richer and more compelling insights into how the performance of your site affects your users. This concept is not new by any means. In 2010, the Performance and Reliability team I was fortunate enough to lead at Walmartlabs shared our findings around the impact of front-end times on conversion rates. (This study and a number of other case studies tracked over the years can be found at WPOstats.)

Setting up conversion tracking in SpeedCurve RUM is fairly simple and definitely worthwhile. This post covers:

- What is a conversion?

- How to track conversions in SpeedCurve

- Using conversion data with performance data for maximum benefit

- Conversion tracking and user privacy

What is page bloat? And how is it hurting your business, your search rank, and your users?

For more than ten years, I've been writing about page bloat, its impact on site speed, and ultimately how it affects your users and your business. You might think that this topic would be played out by now, but every year I learn new things – beyond the overarching fact that pages keep getting bigger and more complex, as you can see in this chart, using data from the HTTP Archive.

In this post, we'll cover:

- How much pages have grown over the past year

- How page bloat hurts your business and – at the heart of everything – your users

- How page bloat affects Google's Core Web Vitals (and therefore SEO)

- If it's possible to have large pages that still deliver a good user experience

- Page size targets

- How to track page size and complexity

- How to fight regressions

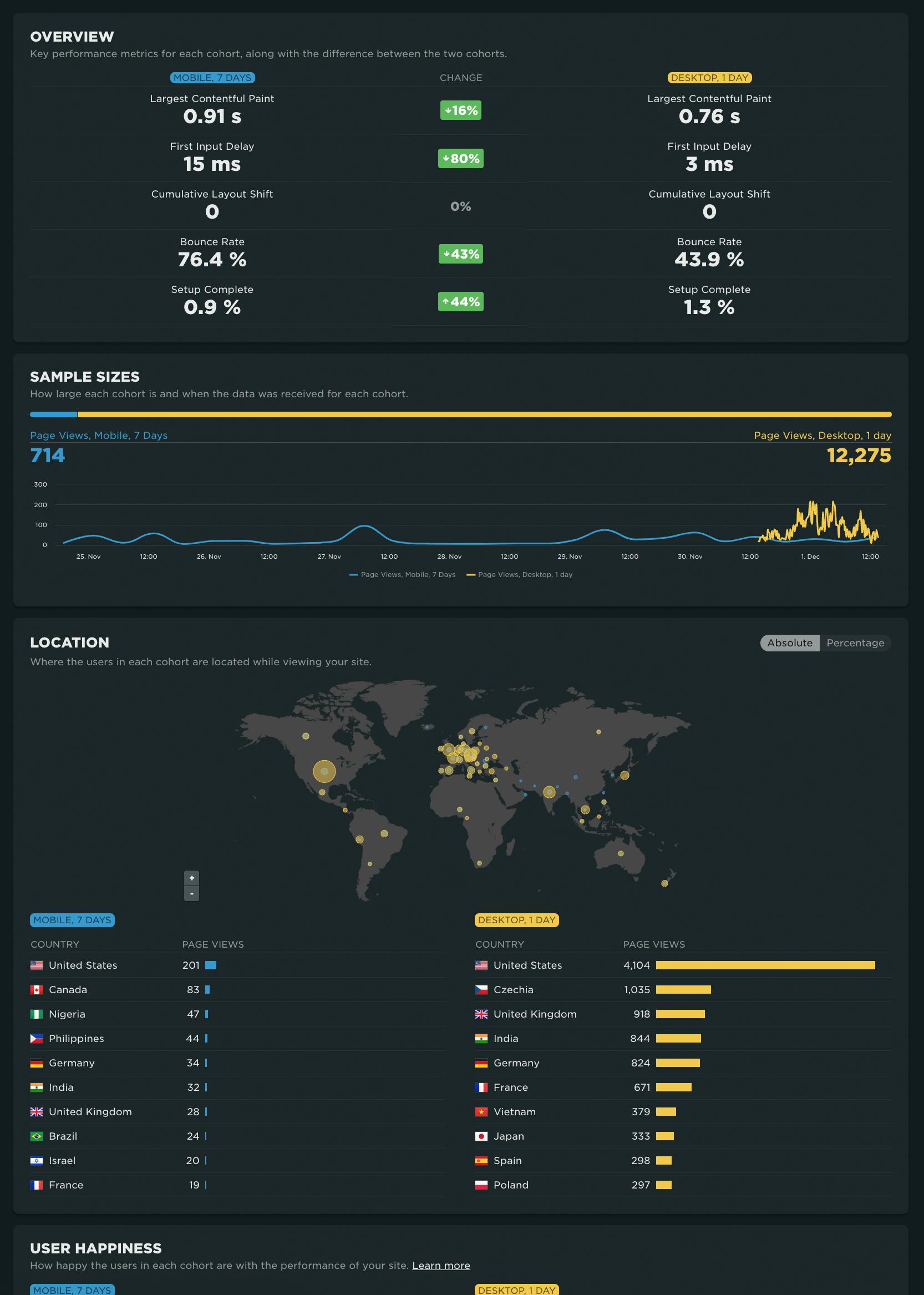

NEW! RUM Compare dashboard

Exploring real user (RUM) data can be a hugely enlightening process. It uncovers things about your users and their behavior that you might never have suspected. That said, it's not uncommon to spend precious time peeling back the layers of the onion, only to find false positives or uncertainty in all that data.

At SpeedCurve, we believe a big part of our job is making your job easier. This was a major driver behind the Synthetic Compare dashboard we released last year, which so many of you have given us great feedback on.

As you may have guessed, since then we've been hard at work coming up with the right way to explore and compare your RUM datasets using a similar design pattern. Today, we are thrilled to announce your new RUM Compare dashboard!

With your RUM Compare dashboard, you can easily generate side-by-side comparisons for any two cohorts of data. Some of the many reasons you might want to do this include:

- Improve Core Web Vitals by identifying the tradeoffs between pages that have different layout and construction

- Triage a performance regression related to the latest change or deployment to your site by looking at a before/after comparison

- Explore and compare different out-of-the-box cohorts, such as device types, geographies, page labels, and more

- Analyze A/B tests or experiments to understand which had the most impact on user behavior, as well as performance

- Optimize your funnel by understanding differences between users that convert or bounce from your site and users who don't

- Evaluate CDN performance by exploring the impact of time-of-day traffic patterns
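
To show the kind of arithmetic a before/after comparison involves, here is a minimal sketch that compares the 75th percentile of a metric for two cohorts. It assumes each cohort is simply an array of LCP samples in milliseconds. The function names and sample values are hypothetical, and this is not how the dashboard itself is implemented. The 75th percentile is used because that is the percentile Google uses to assess Core Web Vitals.

```typescript
// Nearest-rank percentile: the smallest sample with at least p% of samples at or below it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Compare the same percentile across two cohorts; a positive delta means the second is slower.
function compareCohorts(before: number[], after: number[], p: number = 75) {
  const beforeValue = percentile(before, p);
  const afterValue = percentile(after, p);
  return { before: beforeValue, after: afterValue, delta: afterValue - beforeValue };
}

// Made-up LCP samples (ms) from page views before and after a deployment.
const lcpBeforeDeploy = [1200, 2400, 1500, 1800];
const lcpAfterDeploy = [3100, 1400, 2600, 1900];
const comparison = compareCohorts(lcpBeforeDeploy, lcpAfterDeploy);
console.log(comparison);
```

The same comparison works for any two cohorts: device types, geographies, or the two arms of an A/B test.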

Let's take a tour...

Element Timing: One true metric to rule them all?

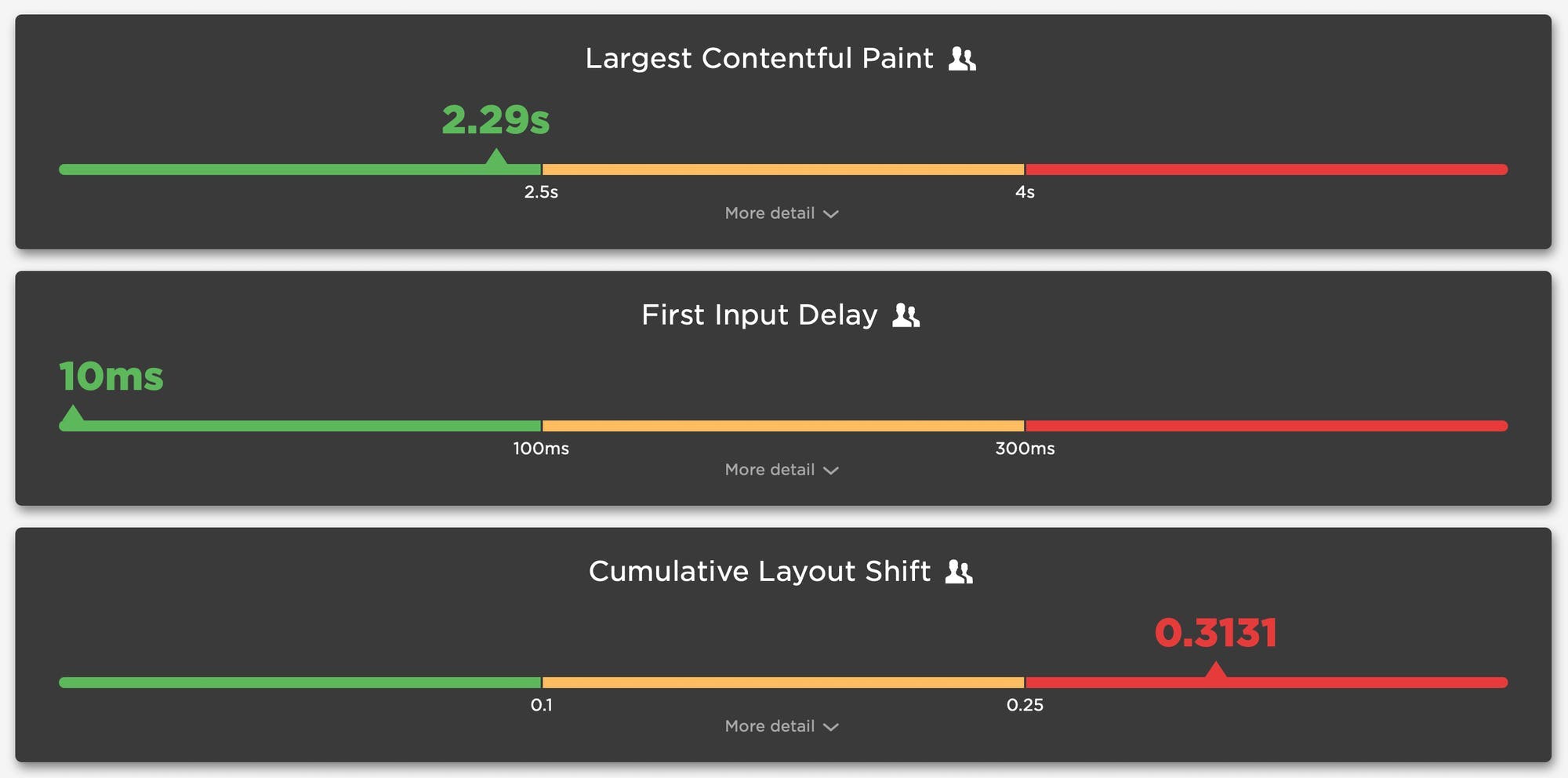

One of the great things about Google's Core Web Vitals is that they provide a standard way to measure our visitors’ experience. Core Web Vitals can answer questions like:

- When was the largest element displayed? Largest Contentful Paint (LCP) measures when the largest visual element (image, video, or block of text) finishes rendering.

- How much did the content move around as it loads? Cumulative Layout Shift (CLS) measures the visual stability of a page.

- How responsive was the page to visitors' actions? First Input Delay (FID) measures how quickly a page responds to a visitor's first interaction, such as a click/tap or keypress.

Sensible defaults, such as Core Web Vitals, are a good start, but one pitfall of standard measures is that they can miss what’s actually most important.

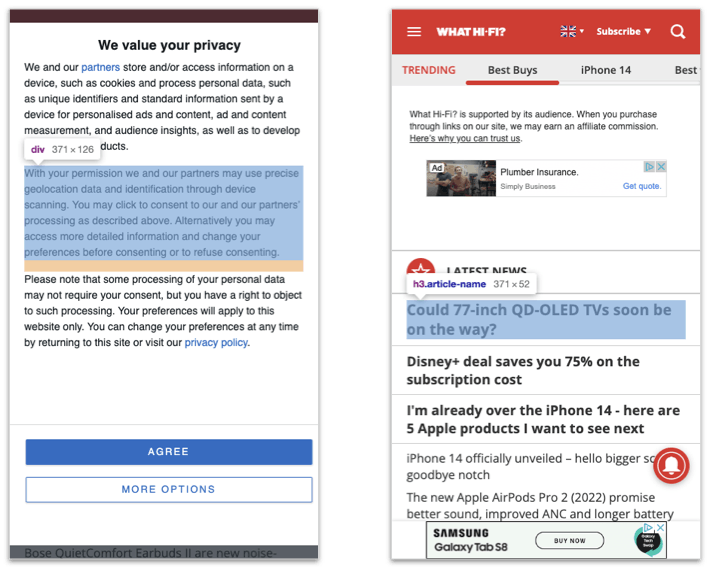

The (potential) problems with Largest Contentful Paint

Largest Contentful Paint (LCP) makes the assumption that the largest visible element is the most important content from the visitors’ perspective; however, we don’t have a choice about which element it measures. LCP may not be measuring the most appropriate – or even the same – element for each page view.

The LCP element can vary for first-time vs repeat visitors

In the case of a first-time visitor, the largest element might be a consent banner. On subsequent visits to the same page, the largest element might be an image for a product or a photo that illustrates a news story.

The screenshots from What Hifi (a UK audio-visual magazine) illustrate this problem. When the consent banner is shown, one of its paragraphs is the LCP element. When the consent banner is not shown, an article title becomes the LCP element. In other words, the LCP timestamp varies depending on which of these two experiences the visitor had!

What Hi Fi with and without the consent banner visible
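
The effect can be reproduced with a simplified model of how the LCP candidate is chosen. The sketch below is a model, not the browser's implementation: it ignores details such as user input ending the measurement and elements being removed from the page. The element names, areas, and timings are invented for illustration.

```typescript
// Simplified model of a painted element; the field names are illustrative.
interface PaintedElement {
  id: string;
  area: number;       // visible size in the viewport, in square CSS pixels
  renderTime: number; // milliseconds since navigation start
}

// Walk elements in paint order and keep the largest seen so far, mirroring how
// a new LCP candidate is only reported when a larger element is painted.
function pickLcp(elements: PaintedElement[]): PaintedElement | undefined {
  const inPaintOrder = elements.slice().sort((a, b) => a.renderTime - b.renderTime);
  let candidate: PaintedElement | undefined;
  for (const el of inPaintOrder) {
    if (candidate === undefined || el.area > candidate.area) candidate = el;
  }
  return candidate;
}

const articleTitle: PaintedElement = { id: "article-title", area: 60000, renderTime: 900 };
const consentText: PaintedElement = { id: "consent-paragraph", area: 95000, renderTime: 1600 };

// First visit: the consent banner paints later and is larger, so it becomes the LCP element.
const firstVisit = pickLcp([articleTitle, consentText]);
// Repeat visit: no banner, so the article title is the LCP element.
const repeatVisit = pickLcp([articleTitle]);

console.log(firstVisit, repeatVisit);
```

In this model the same page reports an LCP of 1600 ms or 900 ms depending only on whether the banner appeared, which is why the two experiences are hard to compare.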


Ten years of page bloat: What have we learned?

(See our more recent page growth post: What is page bloat? And how is it hurting your business, search rank, and users?)

I've been writing about page size and complexity for years. If you've been working in the performance space for a while and you hear me start to talk about page growth, I'd forgive you if you started running away. ;)

But pages keep getting bigger and more complex year over year – and this increasing size and complexity is not fully mitigated by faster devices and networks, or by our hard-working browsers. Clearly we need to keep talking about it. We need to understand how ever-growing pages work against us. And we need to have strategies in place to understand and manage our pages.

In this post, we'll cover:

- How big are pages today versus ten years ago?

- How does page bloat hurt your business?

- How does page bloat affect other metrics, such as Google's Core Web Vitals?

- Is it possible to have large pages that deliver a good user experience?

- What can we do to manage our pages and fight regression?

SEO and web performance: What to measure and how to optimize

Chances are, you're here because of Google's update to its search algorithm, which affects both desktop and mobile, and which includes Core Web Vitals as a ranking factor. You may also be here because you've heard about the most recent potential candidates for addition to Core Web Vitals, which were just announced at Chrome Dev Summit.

A few things are clear:

- Core Web Vitals, as a premise, are here to stay for a while.

- The metrics that comprise Web Vitals are still evolving.

- These metrics will (I think) always be in a state of evolution. That's a good thing. We need to do our best to stay up to date – not just with which metrics to track, but also with what they measure and why they're important.

If you're new to Core Web Vitals, this is a Google initiative that was launched in early 2020. Web Vitals is (currently) a set of three metrics – Largest Contentful Paint, First Input Delay, and Cumulative Layout Shift – that are intended to measure the loading, interactivity, and visual stability of a page.

When Google talks, people listen. I talk with a lot of companies and I can attest that, since Web Vitals were announced, they've shot to the top of many people's list of things to care about. But Google's prioritization of page speed in search ranking isn't new, even for mobile. As far back as 2013, Google announced that pages that load slowly on mobile devices would be penalized in mobile search.

Keep reading to find out:

- How much does web performance matter when it comes to SEO?

- Which performance metrics should you focus on for SEO?

- What can you do to make your pages faster for SEO purposes?

- What are some of the common issues that can hurt your Web Vitals?

- How can you track performance for SEO?