What is page bloat? And how is it hurting your business, your search rank, and your users?

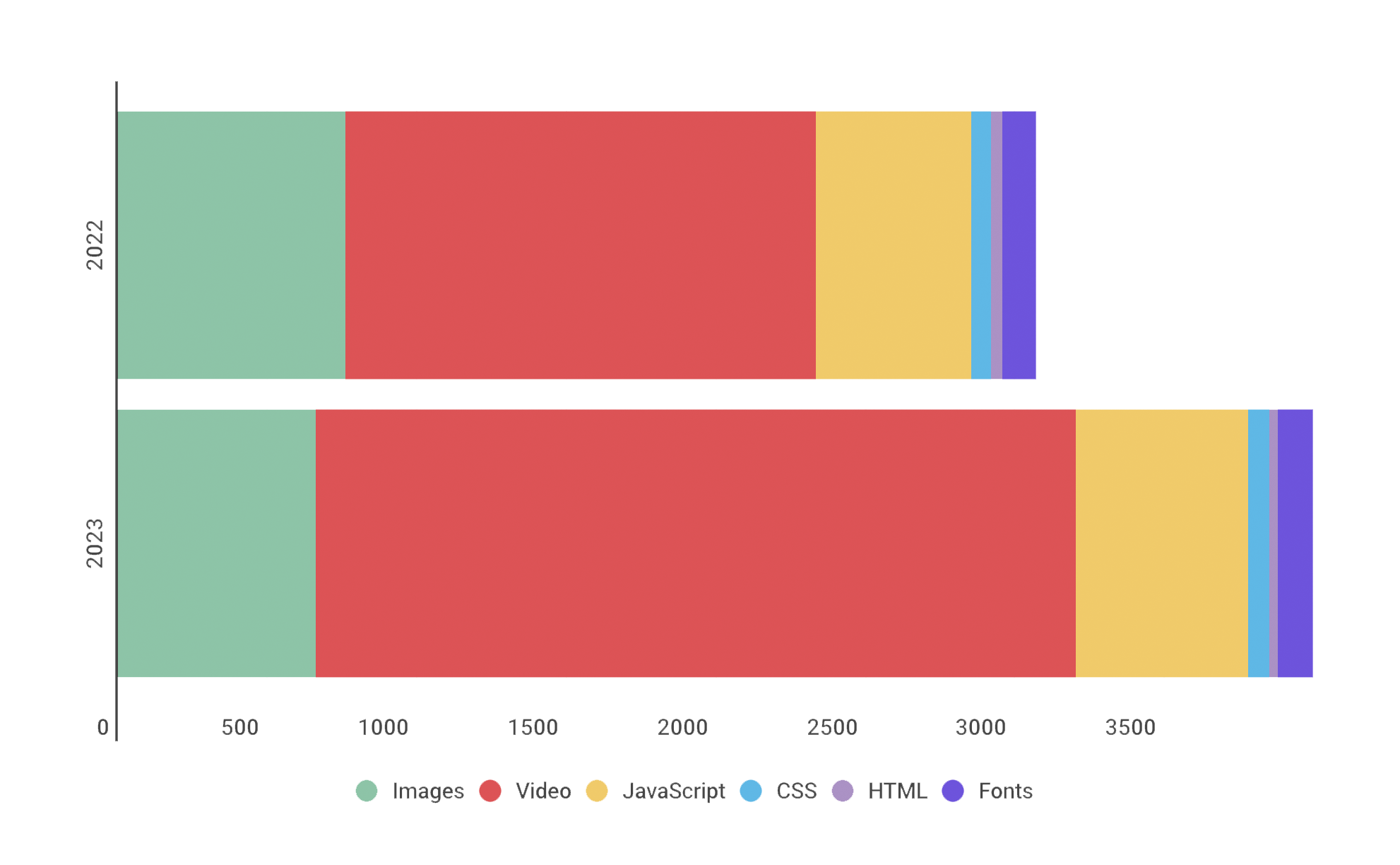

For more than ten years, I've been writing about page bloat, its impact on site speed, and ultimately how it affects your users and your business. You might think that this topic would be played out by now, but every year I learn new things – beyond the overarching fact that pages keep getting bigger and more complex, as you can see in this chart, using data from the HTTP Archive.

In this post, we'll cover:

- How much pages have grown over the past year

- How page bloat hurts your business and – at the heart of everything – your users

- How page bloat affects Google's Core Web Vitals (and therefore SEO)

- Whether it's possible to have large pages that still deliver a good user experience

- Page size targets

- How to track page size and complexity

- How to fight regressions

What do we mean when we talk about page size?

When we talk about page size, we're referring to overall page weight and complexity. This includes:

- Size – Total page weight in bytes. Size matters, especially to mobile users who have limited and/or metered data.

- Resources – Total number of resources (HTML, images, fonts, etc.) on the page. The more resources, the greater the complexity and the increased likelihood of rendering delays and blockages.

- HTML – Typically the smallest resource on the page, HTML's performance risk is usually negligible. Having said that, I recently did some digging into a page where the total HTML size jumped dramatically because of a bunch of inline JavaScript, which led to rendering delays, so keeping an eye on HTML size is still a good idea.

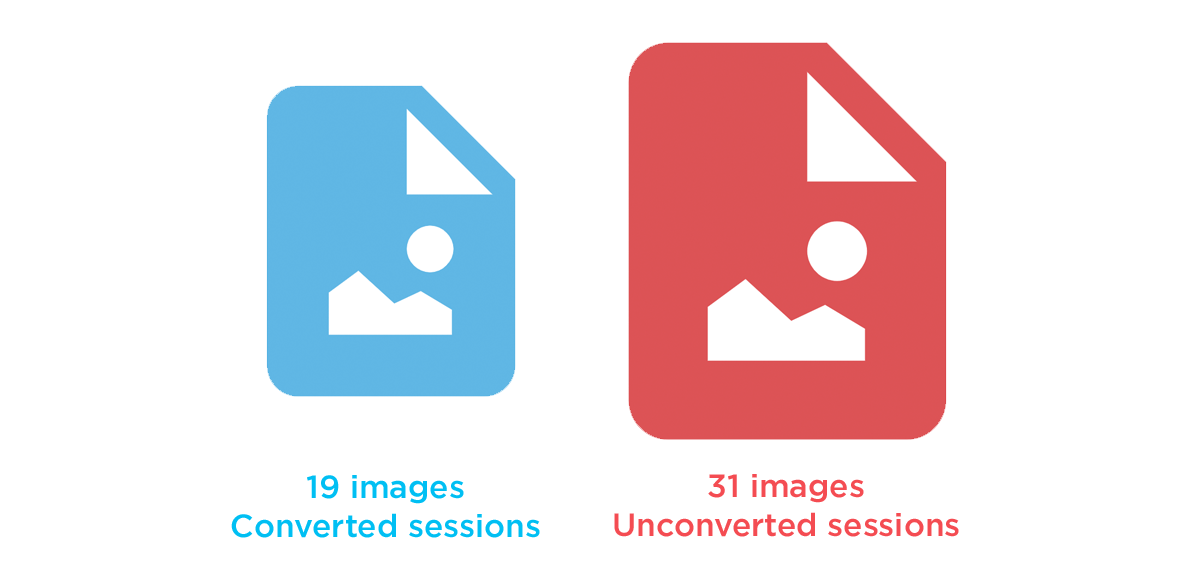

- Images – Often the greatest contributor to page bloat. Looking at the 90th percentile of the distribution of page weight, images account for a whopping 5.7 MB of a roughly 8.2 MB page. In other words, images comprised almost 70% of the total page weight. And if that weren’t enough, the number of images on a page has been linked to lower conversion rates on retail sites. (More on that later.)

- Video – For the first time in the many years that I've been covering the topic of page growth, I'm going to focus on the volume of video that pages contain. That's because video seems to have had a surge in growth, which should be a cause of concern for anyone who cares about metrics like Largest Contentful Paint, which measures the largest visual element on a page – including videos. (More on that below.)

- JavaScript – A page can have a relatively low JS weight but still suffer from JS-inflicted performance problems. Even a single 100 KB third-party script can wreak havoc with your page. The more scripts on your page, the greater the risk. It’s not enough to focus solely on blocking JavaScript. It’s possible for your pages to contain zero blocking resources and still have less-than-optimal performance because of how your JavaScript is executed. That’s why it’s so important to understand CPU usage on your pages, because JavaScript consumes more CPU than all other browser activities combined. While JavaScript blocks the CPU, the browser can’t respond to user input. This creates what’s commonly called “jank” – that annoying feeling of jittery, unstable page rendering.

- CSS – Like JavaScript, CSS doesn’t have to be bulky to cause problems. Poorly executed stylesheets can create a host of performance problems, ranging from stylesheets taking too long to download and parse, to improperly placed stylesheets that block the rest of the page from rendering. And, similar to JavaScript, more CSS files equals more potential trouble.
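To make the size and resource-count dimensions above concrete, here is a minimal sketch that totals page weight and request counts per resource type. The `ResourceEntry` shape and its field names are assumptions for illustration (loosely modeled on what resource timing data or a HAR file would give you), not the output of any particular tool.

```typescript
// Hypothetical input shape: one entry per resource the page fetched.
type ResourceType = "html" | "image" | "video" | "script" | "css" | "font" | "other";

interface ResourceEntry {
  url: string;
  type: ResourceType;
  bytes: number; // transfer size in bytes
}

interface TypeSummary {
  requests: number;
  bytes: number;
  share: number; // fraction of total page weight, between 0 and 1
}

interface PageSummary {
  totalBytes: number;
  totalRequests: number;
  byType: Partial<Record<ResourceType, TypeSummary>>;
}

function summarizePage(entries: ResourceEntry[]): PageSummary {
  // Total weight is computed first so each share can be derived from it.
  const totalBytes = entries.reduce((sum, e) => sum + e.bytes, 0);
  const byType: Partial<Record<ResourceType, TypeSummary>> = {};

  for (const e of entries) {
    const current = byType[e.type] ?? { requests: 0, bytes: 0, share: 0 };
    current.requests += 1;
    current.bytes += e.bytes;
    current.share = totalBytes > 0 ? current.bytes / totalBytes : 0;
    byType[e.type] = current;
  }

  return { totalBytes, totalRequests: entries.length, byType };
}
```

Feeding this a page with 5.7 MB of images out of 8.2 MB total would report an image share of about 0.70, which is the kind of breakdown that tells you where to start trimming.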

How does page bloat hurt Core Web Vitals?

Core Web Vitals are a Google search ranking factor. Given that Google continues to dominate search usage, you should care about Vitals alongside the other metrics you should be tracking.

Page bloat can have a huge impact on your Vitals:

- Cumulative Layout Shift – Excessive resources can contribute to a poorer CLS score, as more elements shift on the page.

- Largest Contentful Paint – LCP measures when the largest visual element (image or video) in the viewport finishes rendering. Heavier visual elements can take much longer to render, especially videos. If you're serving huge videos that take several seconds to fully render, it could be hurting your LCP times.

- Interaction to Next Paint & Total Blocking Time – Excessive and/or non-performant JavaScript can hurt interactivity metrics, like INP, FID, and TBT. The more scripts on your pages, the greater the risk.
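If you want to label your own measurements the way Google does, a small helper like the sketch below can do it. The thresholds are Google's published "good" and "poor" boundaries for LCP, INP, and CLS; the function and type names are my own for this example.

```typescript
type Rating = "good" | "needs improvement" | "poor";

// Google's published boundaries. LCP and INP are in milliseconds; CLS is unitless.
const THRESHOLDS = {
  lcp: { good: 2500, poor: 4000 },
  inp: { good: 200, poor: 500 },
  cls: { good: 0.1, poor: 0.25 },
};

type Vital = keyof typeof THRESHOLDS;

function rateVital(metric: Vital, value: number): Rating {
  const t = THRESHOLDS[metric];
  if (value <= t.good) return "good";
  if (value <= t.poor) return "needs improvement";
  return "poor";
}

// Example: a hero video that takes 3.4 seconds to render.
const heroVideoRating = rateVital("lcp", 3400);
```

Here `heroVideoRating` comes out as "needs improvement", since 3.4 seconds sits between the 2.5 second and 4 second boundaries.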

How does page bloat hurt your business?

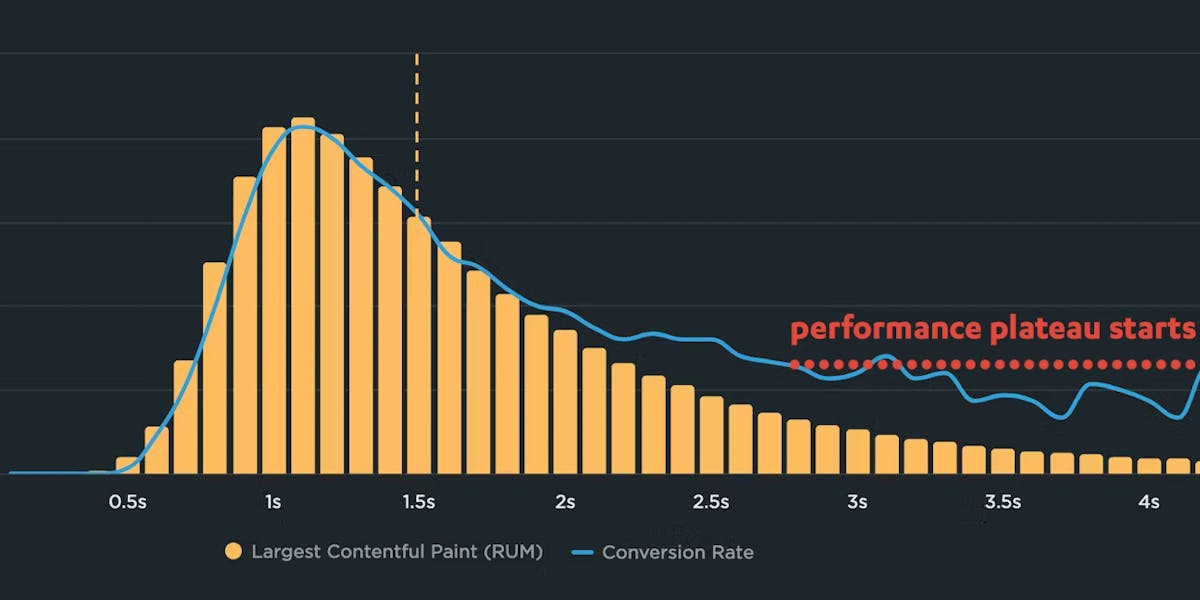

A Google machine-learning study I helped with a few years ago found a few strong predictors of whether or not a page resulted in a conversion, ranked in the following order:

- The total number of page elements was the single greatest predictor of conversions.

- The number of images on the page was the second greatest predictor.

- The more scripts there were in a series of pages in a session, the less likely that session was to convert.

Image size is another issue, as excessive image weight hurts your SEO ranking in Google Image Search. Given that Image Search comprises upwards of 26% of Google searches, this is something you should care about. (You can dive deeper into image optimization and SEO tips in this article in Google Search Central.)

How does page bloat hurt your visitors?

In his excellent series of blog posts, The Performance Inequality Gap, Alex Russell makes a compelling case that serving huge pages is an "ethical crisis for front end":

Meanwhile, sites continue to send more script than is reasonable for 80+% of the world's users, widening the gap between the haves and the have-nots...

Essential public services are now delivered primarily through digital channels in many countries. This means what the frontend community celebrates and promotes has a stochastic effect on the provision of those services — which leads to an uncomfortable conversation because, taken as a whole, it isn't working.

Pervasively poor results are part of why responsible public sector organisations are forced to develop HTML-first, progressive enhancement guidance in stark opposition to the "frontend consensus".

This is an indictment: modern frontend's fascination with towering piles of JavaScript complexity is not delivering better experiences for most users.

HTTP Archive research: Background and caveats

Before we get into the analysis, some context:

- The numbers cited below all come from the HTTP Archive. I looked at the top 1M sites and top 1K sites, focusing on median and 90th percentile numbers. This is to try to understand how a "typical" page might perform, as well as pages in the "longtail". (It's super important to understand longtail performance. If 10% of your visitors are suffering, that's a lot of users!)

- These numbers should not be taken as a benchmark for your own site. You haven't necessarily achieved anything great if your pages are smaller than this, nor have you failed by default if your pages are bigger.

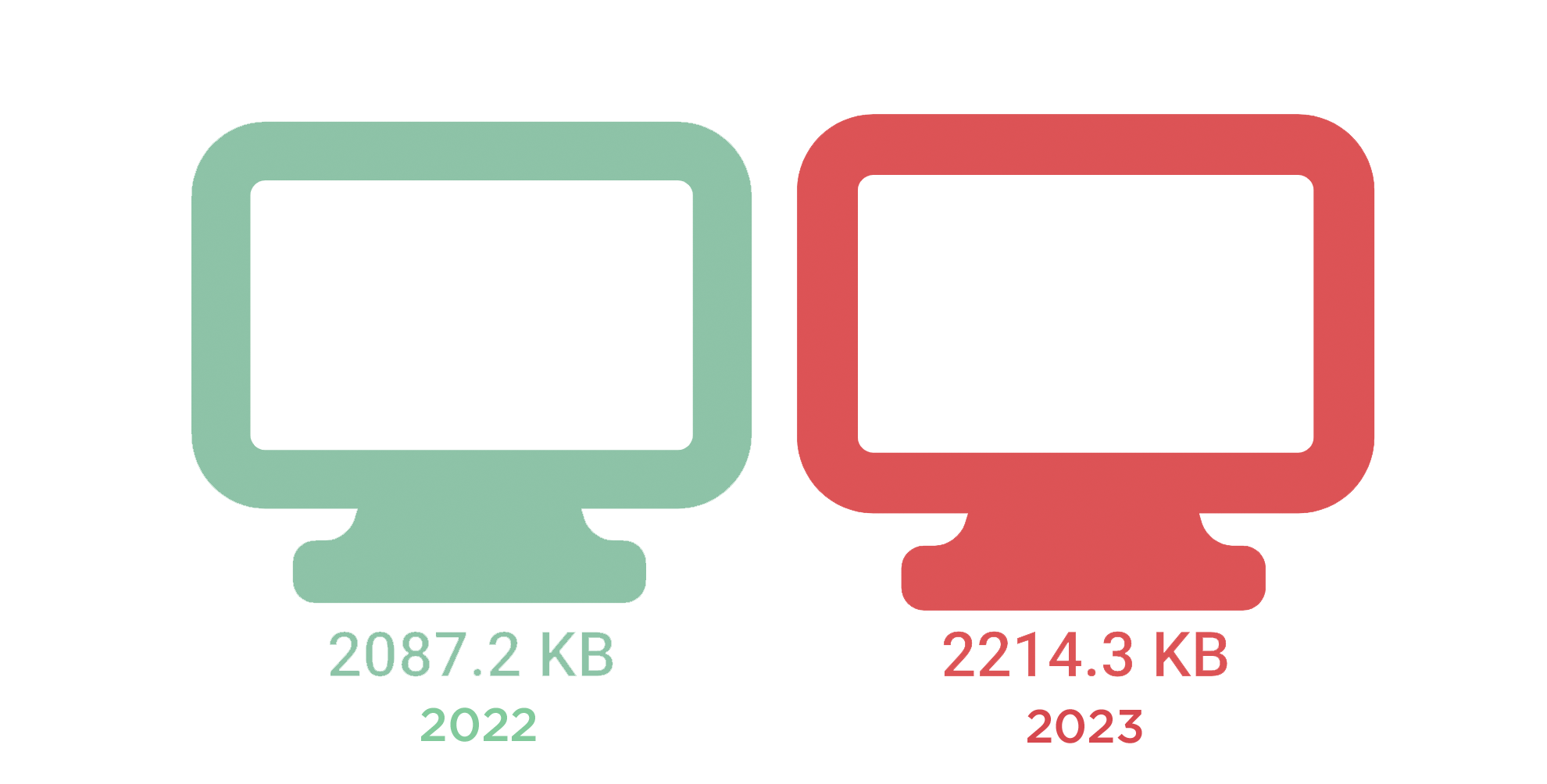

Desktop: The median page has grown 6% in one year

If you look at the year-over-year increase from 2087.2 to 2214.3 KB, you're forgiven if your initial reaction is "that's not too bad". But 6% growth is significant. At that rate, the median page will be close to 3 MB within five years. That's not trivial.

Even if you consider this growth not too worrisome, it's important to keep in mind that it's just an aggregated number, which masks the more dramatic increases in specific resource types, such as JavaScript and video.
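The five-year projection is just compound growth. Here is the arithmetic as a short sketch, assuming the growth rate stays constant at this year's level (a big assumption, and the function name is mine):

```typescript
// Projects a size forward assuming the same growth rate every year.
function projectSize(currentKB: number, annualGrowth: number, years: number): number {
  return currentKB * Math.pow(1 + annualGrowth, years);
}

// Year-over-year growth of the median desktop page (about 6%).
const growth = 2214.3 / 2087.2 - 1;

// Median desktop page weight in KB five years from now at that rate.
const inFiveYears = projectSize(2214.3, growth, 5);
```

With these inputs, `inFiveYears` lands just under 3,000 KB.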

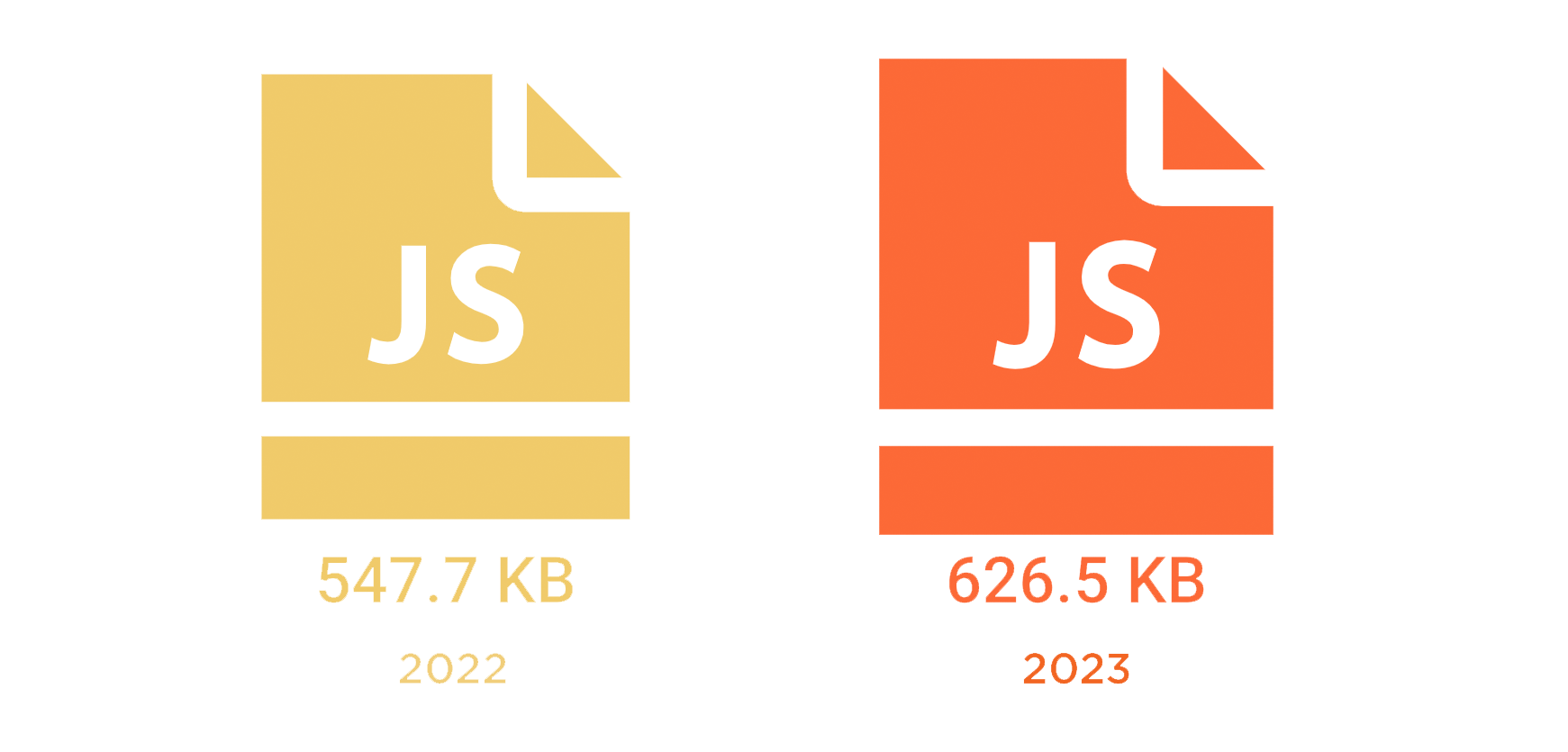

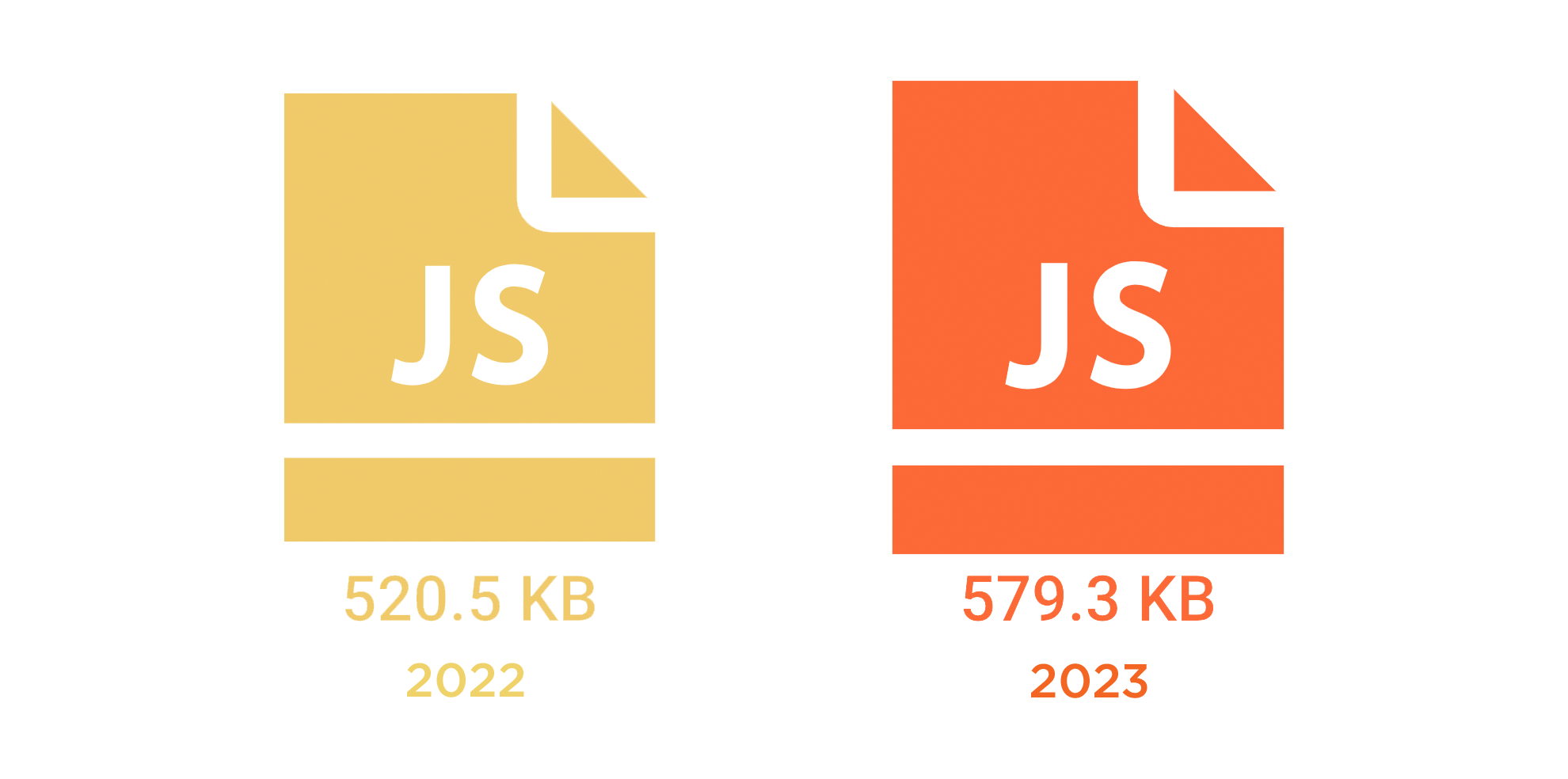

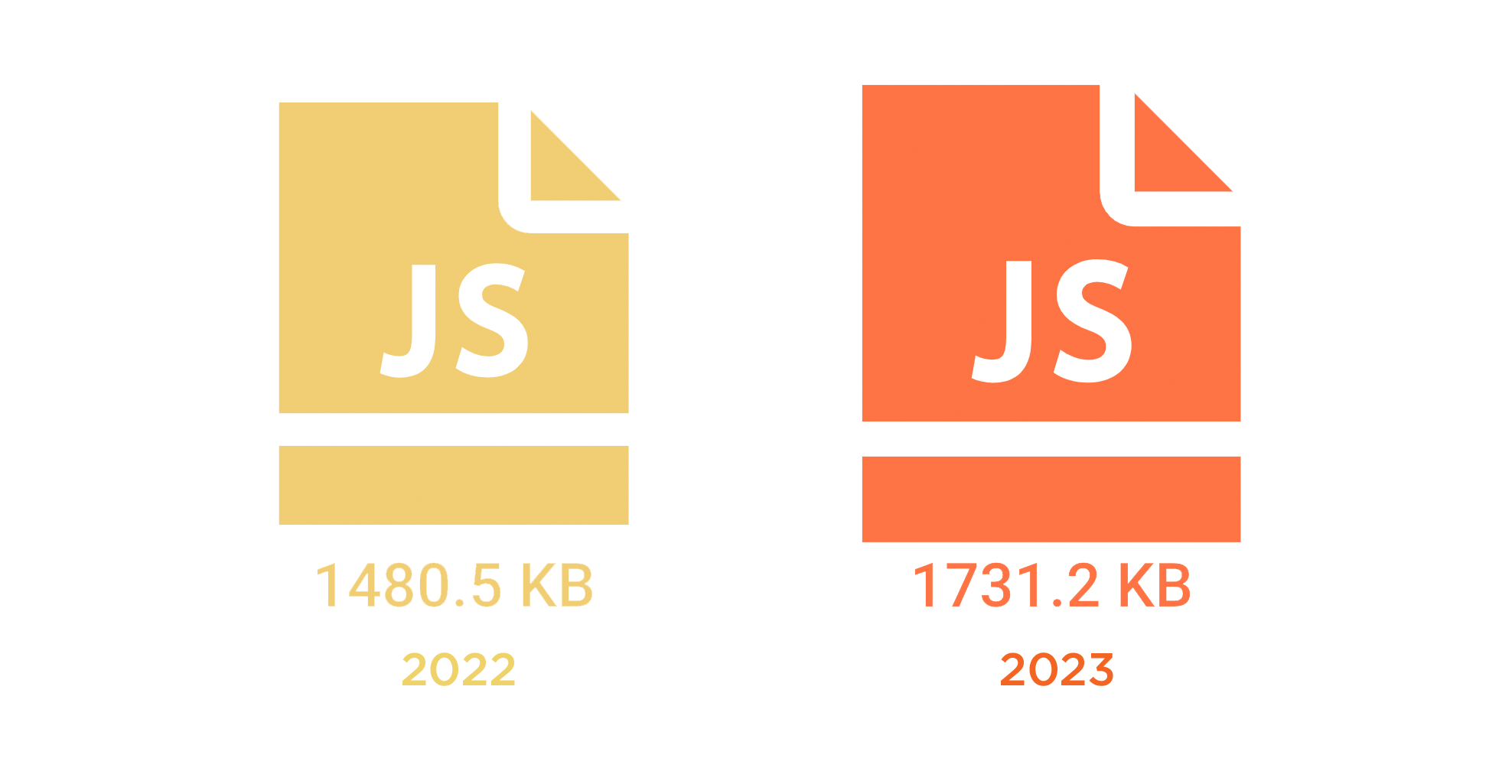

Desktop: Median JavaScript weight increased by 14%

Almost 627 KB of JavaScript – spread across the 24 JS requests contained on the median page – is a lot of JavaScript.

Each script on your page represents a potential single point of failure, especially if the script is render-blocking or has an excessive Long Tasks time. The more scripts on your page, the greater the performance risk. Even if the script doesn't block the page from rendering, excessive and unoptimized JavaScript can hurt interactivity metrics like Interaction to Next Paint and Total Blocking Time.
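To show why Long Tasks matter, here is a simplified sketch of how Total Blocking Time is commonly defined: for every main-thread task longer than 50 ms, count the portion above 50 ms, within a measurement window. The `MainThreadTask` shape and the window parameters are assumptions for this example, and real tools may handle tasks that straddle the window edges differently.

```typescript
// Hypothetical shape for a main-thread task; all times in milliseconds.
interface MainThreadTask {
  startTime: number;
  duration: number;
}

const LONG_TASK_THRESHOLD_MS = 50;

function totalBlockingTime(
  tasks: MainThreadTask[],
  windowStart: number,
  windowEnd: number
): number {
  return tasks
    // Simplification: only tasks that start inside the window are counted.
    .filter((t) => t.startTime >= windowStart && t.startTime < windowEnd)
    // Only the time beyond 50 ms counts as "blocking".
    .reduce((sum, t) => sum + Math.max(0, t.duration - LONG_TASK_THRESHOLD_MS), 0);
}
```

A single 120 ms task contributes 70 ms of blocking time, while five 40 ms tasks contribute none. That is why one badly behaved script can do more damage than several small ones.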

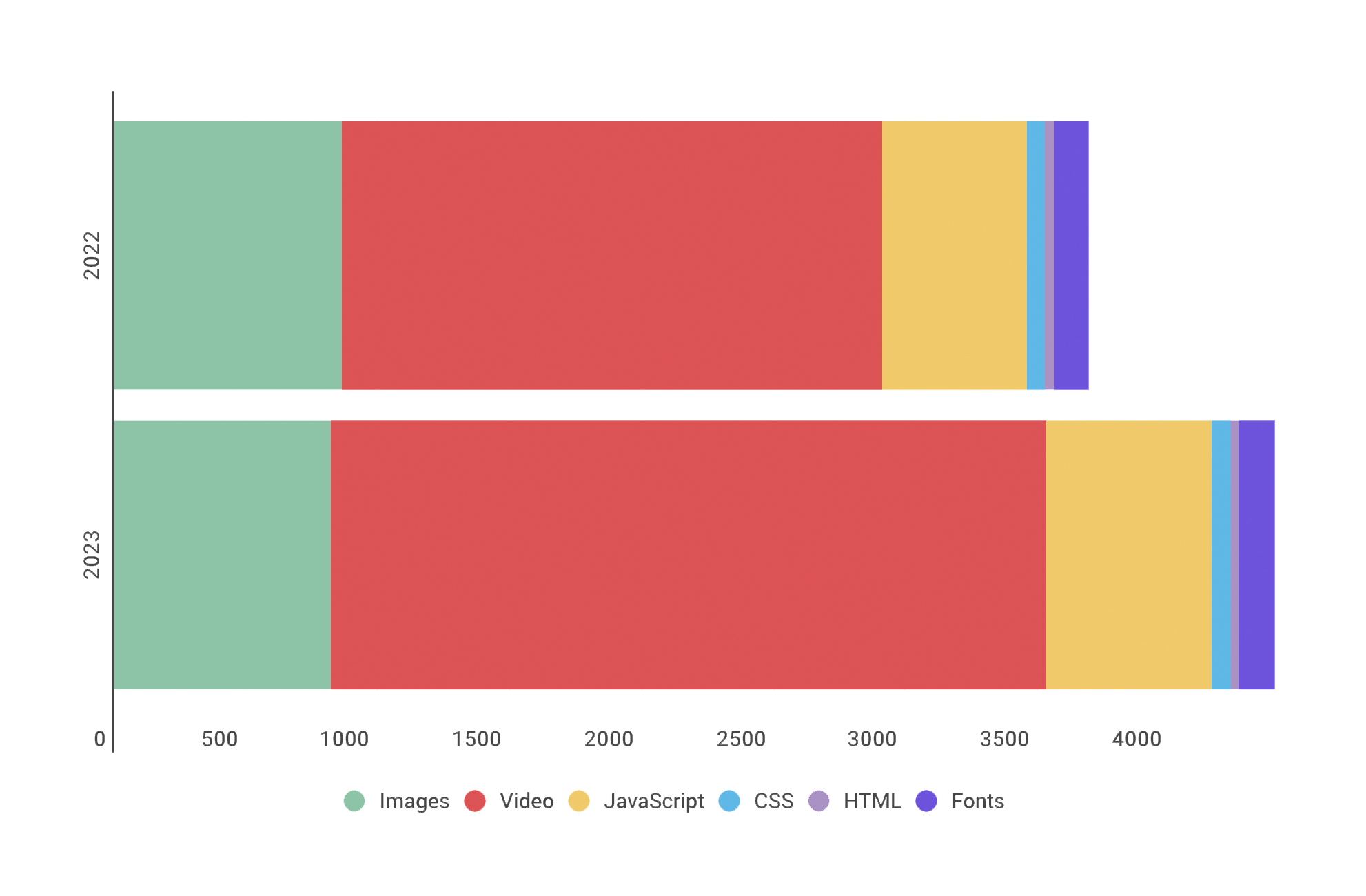

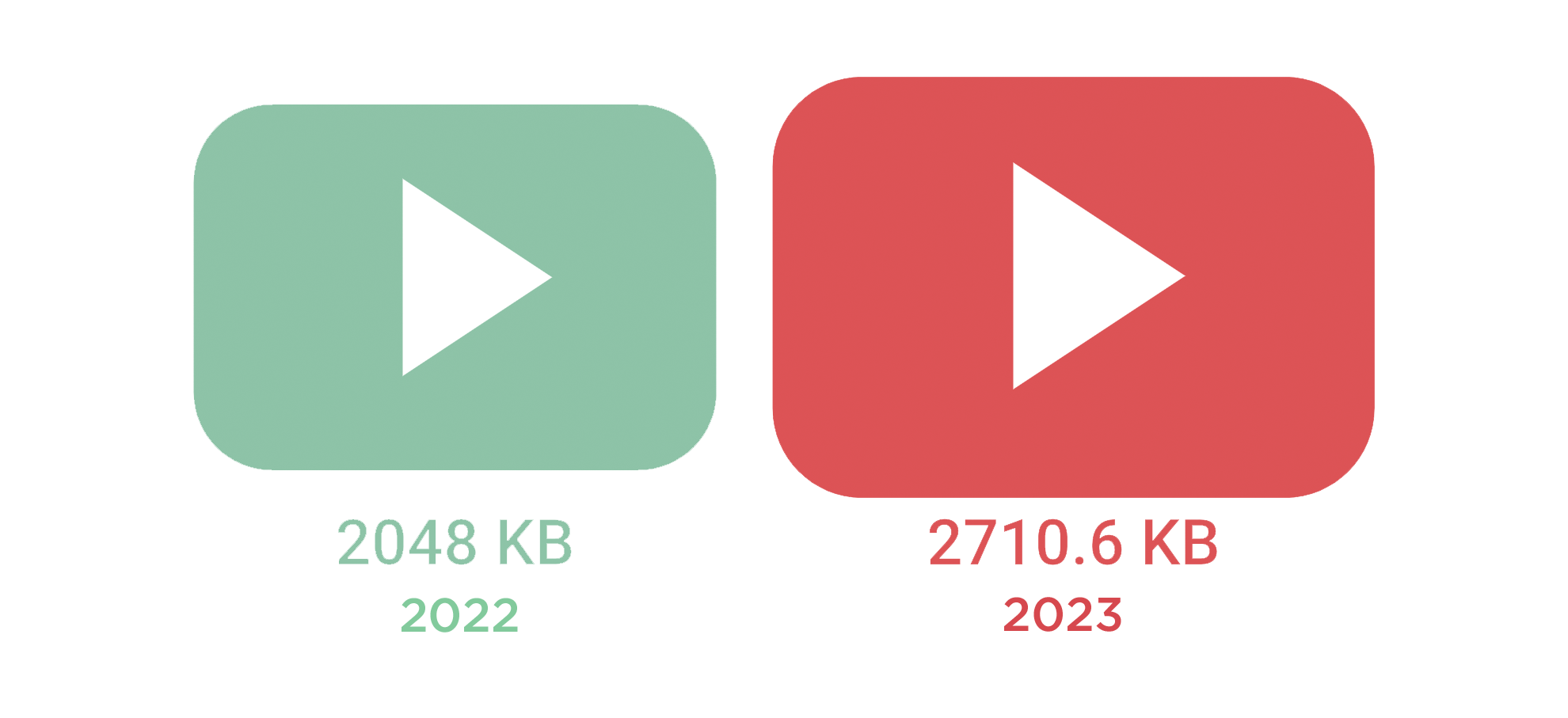

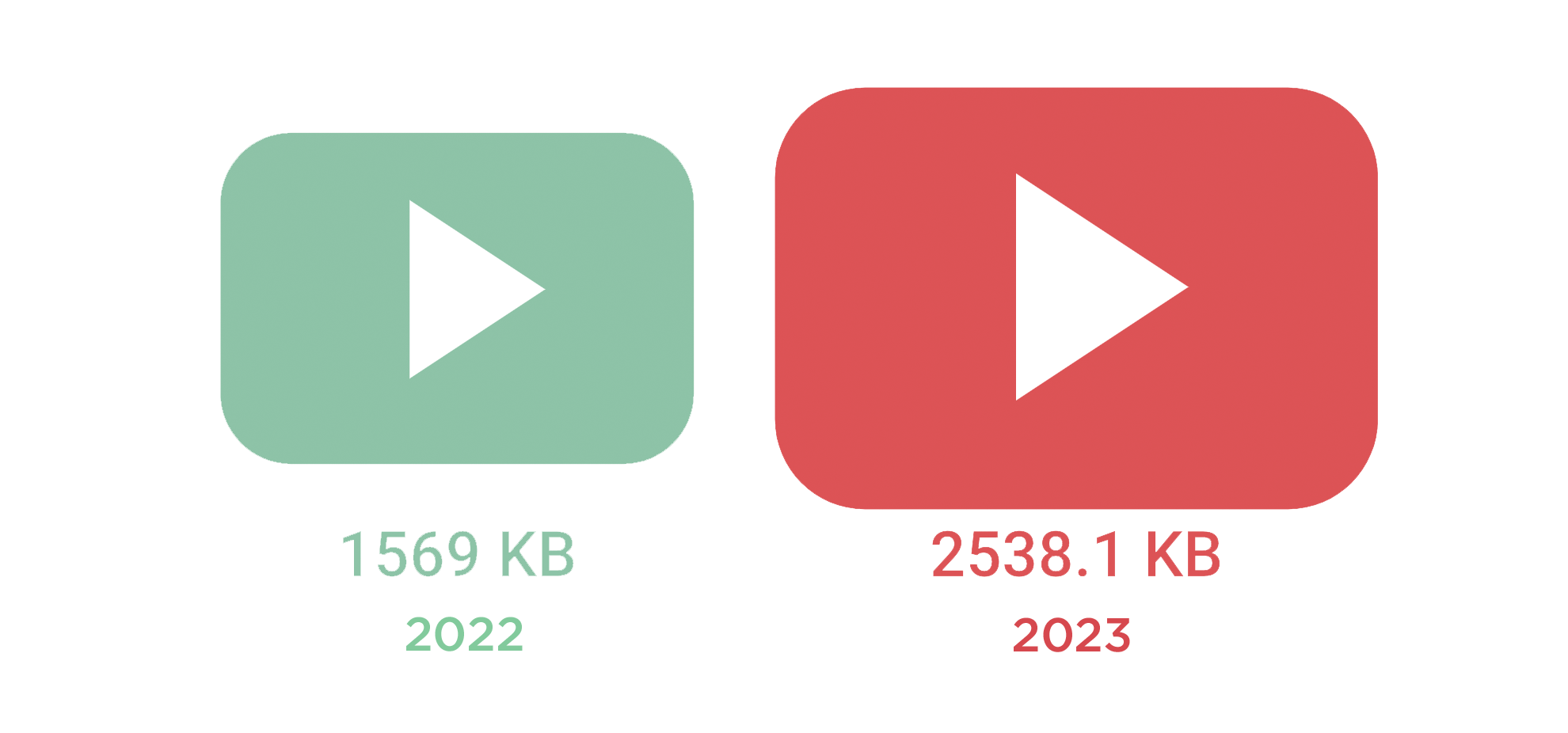

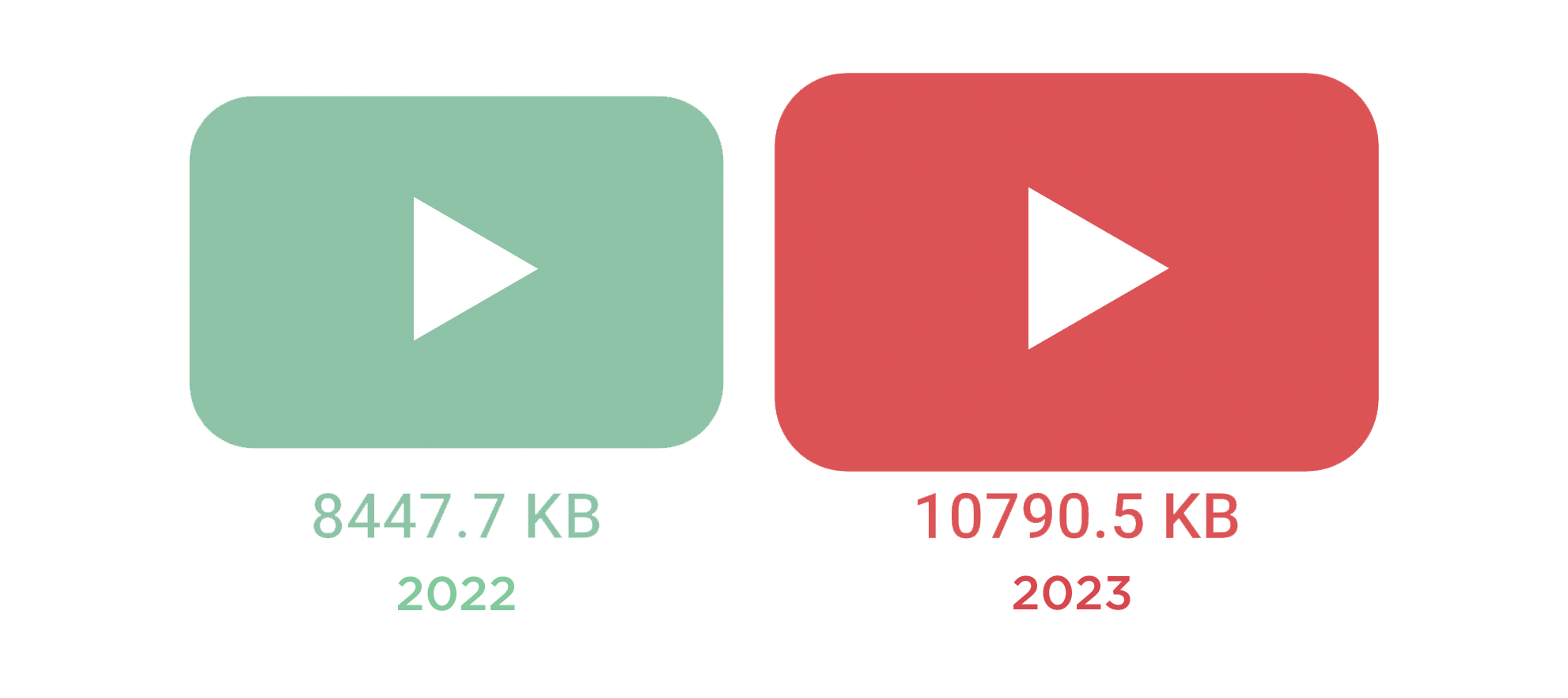

Desktop: Median video weight increased by 32%

In other words, the amount of video being served has increased by almost one-third. That's huge.

As mentioned earlier in this post, Largest Contentful Paint (one of Google's Core Web Vitals) measures when the largest visual element (image or video) in the viewport *finishes* rendering. Heavier visual elements can take much longer to render, especially videos. If you're serving huge videos to your users, it could be hurting your LCP times, which could then be hurting your Google search ranking.

(If you're adding up the numbers and wondering how the video weight makes sense given the overall page weight, it's important to keep in mind that these medians are calculated based on pages that contain these resources. Of the million URLs tracked by the HTTP Archive, not all contain video.)
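Here is a small sketch of why a per-resource median can look out of step with the median total. The page data is made up for illustration: most pages serve no video at all, so the median across all pages is zero, while the median among pages that do serve video is large.

```typescript
function median(values: number[]): number {
  if (values.length === 0) return NaN;
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 0 ? (sorted[mid - 1] + sorted[mid]) / 2 : sorted[mid];
}

// Hypothetical video weight in KB for eight pages; 0 means the page has no video.
const videoKBPerPage = [0, 0, 0, 2600, 0, 3100, 0, 1800];

// Median across every page, including those with no video.
const medianAllPages = median(videoKBPerPage);

// Median across only the pages that contain video.
const medianPagesWithVideo = median(videoKBPerPage.filter((kb) => kb > 0));
```

In this made-up sample, `medianAllPages` is 0 and `medianPagesWithVideo` is 2600, so the two numbers describe very different populations.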

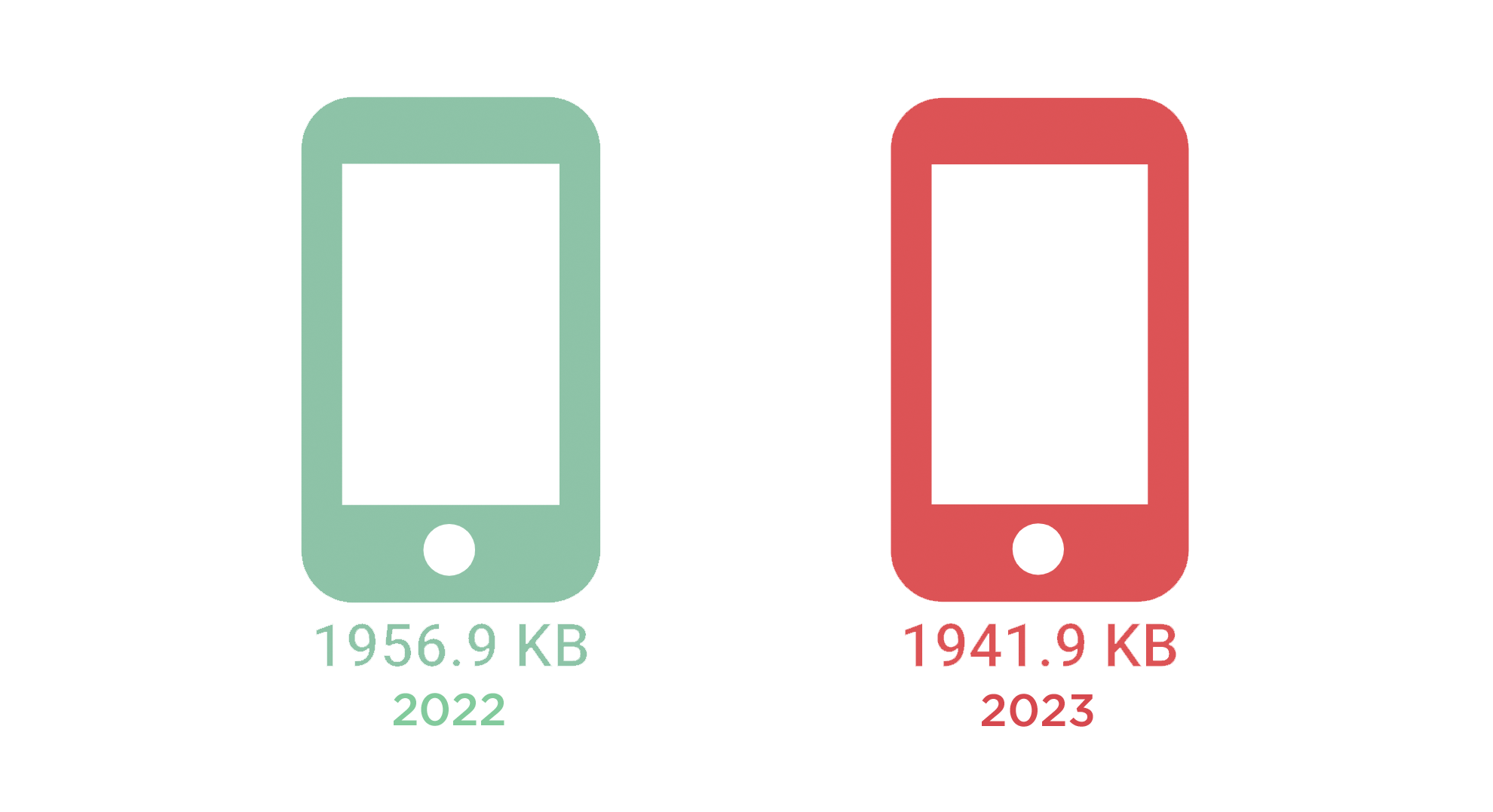

Mobile: The median page is smaller (but there's a catch)

It's genuinely heartening to see that, overall, pages served to mobile haven't gotten bigger.

However, when we look at the breakdown across resource types, we can see growth in video and JavaScript, as we did with desktop:

More on this below...

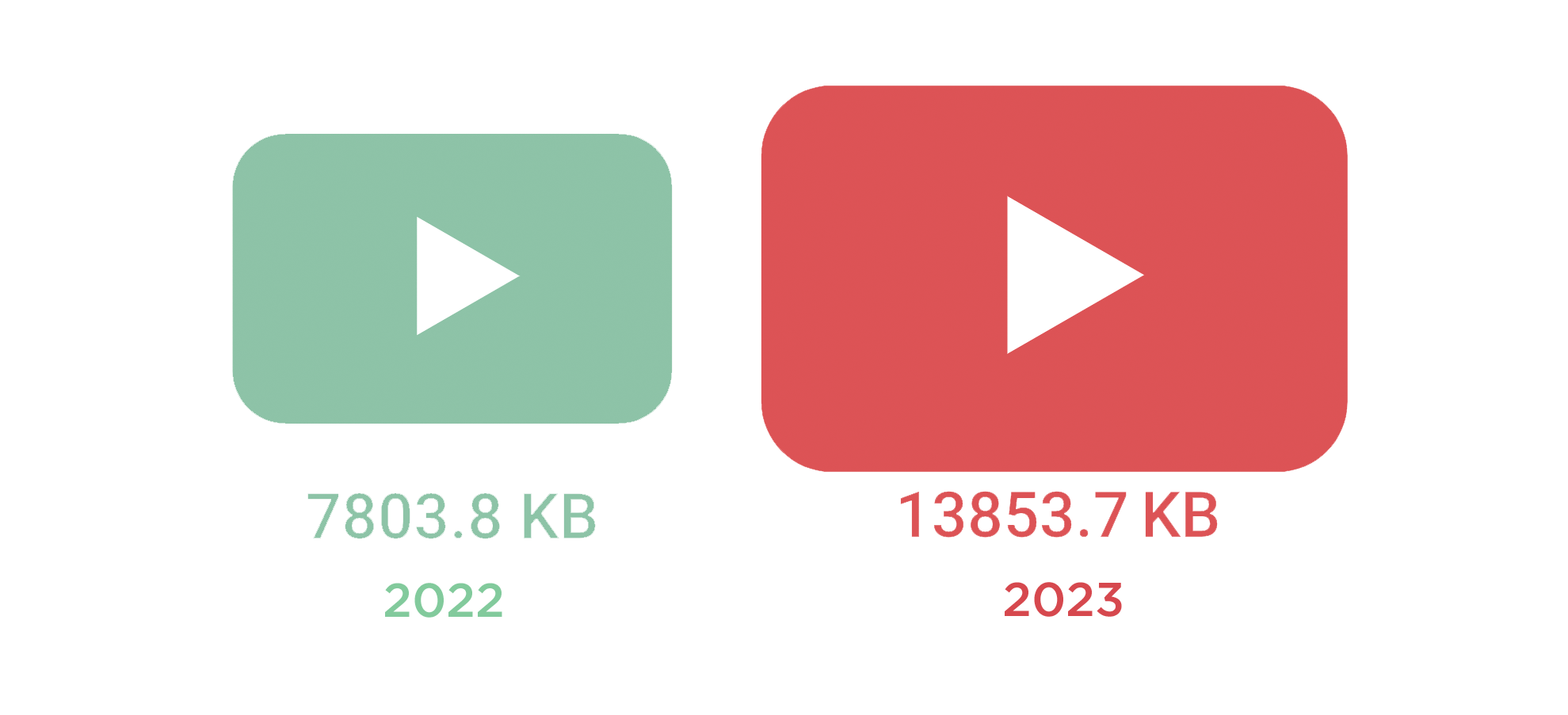

Mobile: Median video weight increased by 62%

It's hard to decide which is more concerning: the fact that video weight has increased so dramatically in just one year, or the fact that the median page that serves video to mobile devices now serves more than 2.5 MB of video.

It's easy to forget that not everyone has unlimited data. If you're serving unasked-for video to your users, you could be eating up their data plans.

It's also important to keep in mind that Google's recommended 2.5s threshold for "good" LCP applies to both mobile and desktop experiences – it doesn't differentiate. If your videos are the hero element on your pages, this could be pushing your mobile LCP times well past that threshold.

(If you're skimming this post and missed the earlier note about video weight, I'll mention again that these medians are calculated based on pages that contain these resources. Of the million URLs tracked by the HTTP Archive, not all contain video. That's why the numbers for median total size don't "match" the median video weight.)

Mobile: Median JavaScript weight increased by 11%

Almost 580 KB of JavaScript – spread across the 24 JS requests contained on the median page – is a lot of JavaScript. Given that mobile devices tend to have slower CPUs than desktop, parsing all that JavaScript comes with a performance cost.

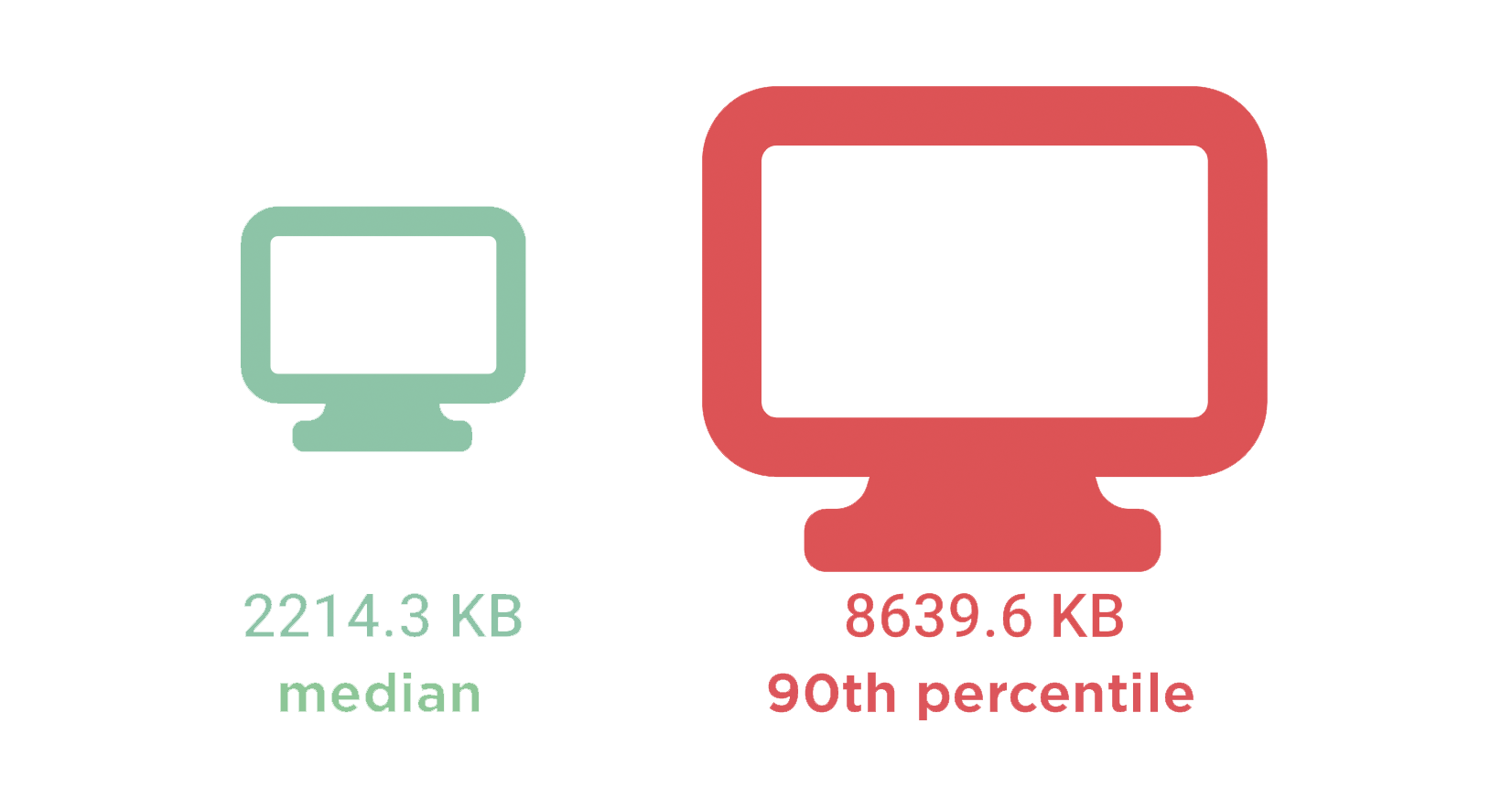

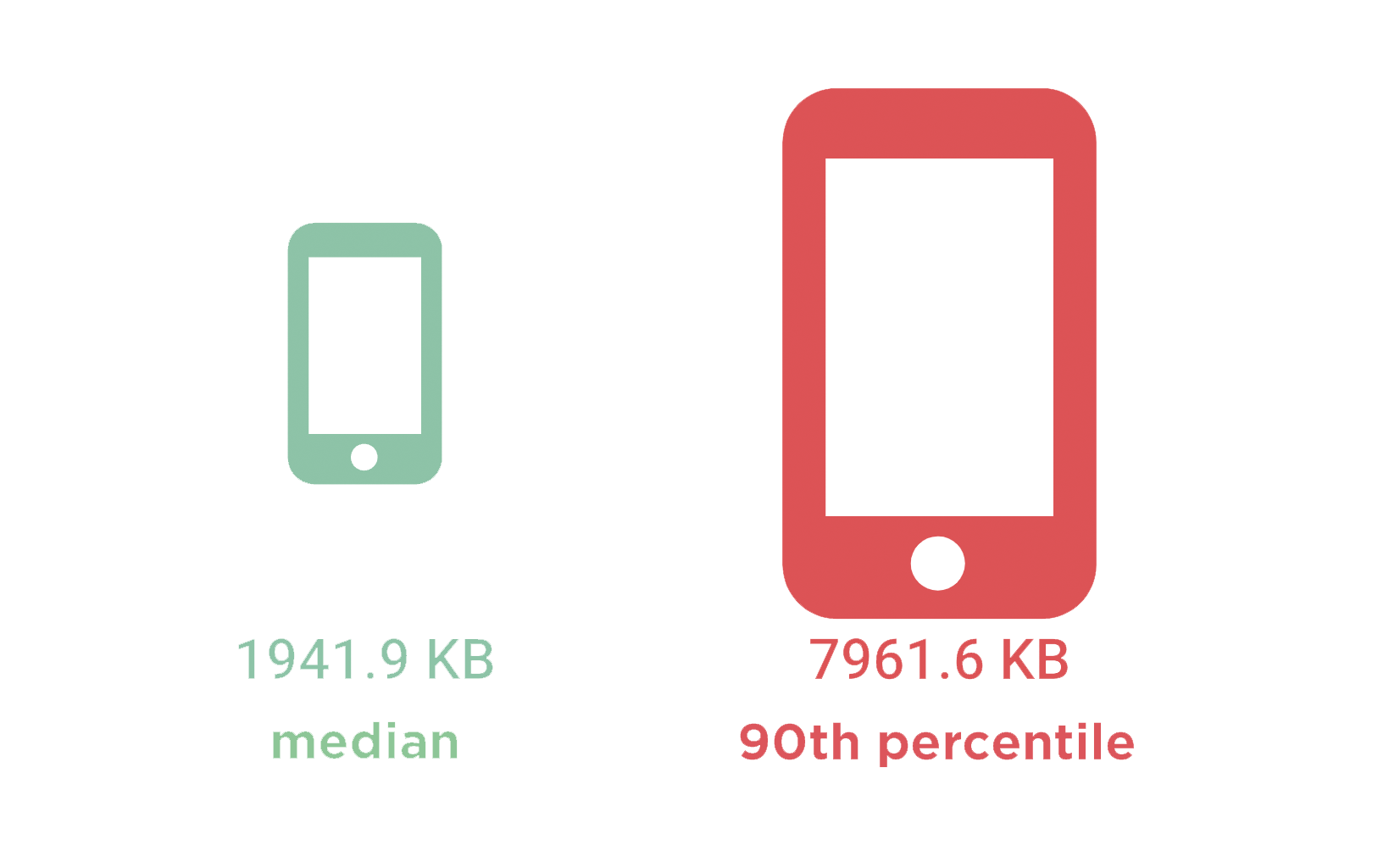

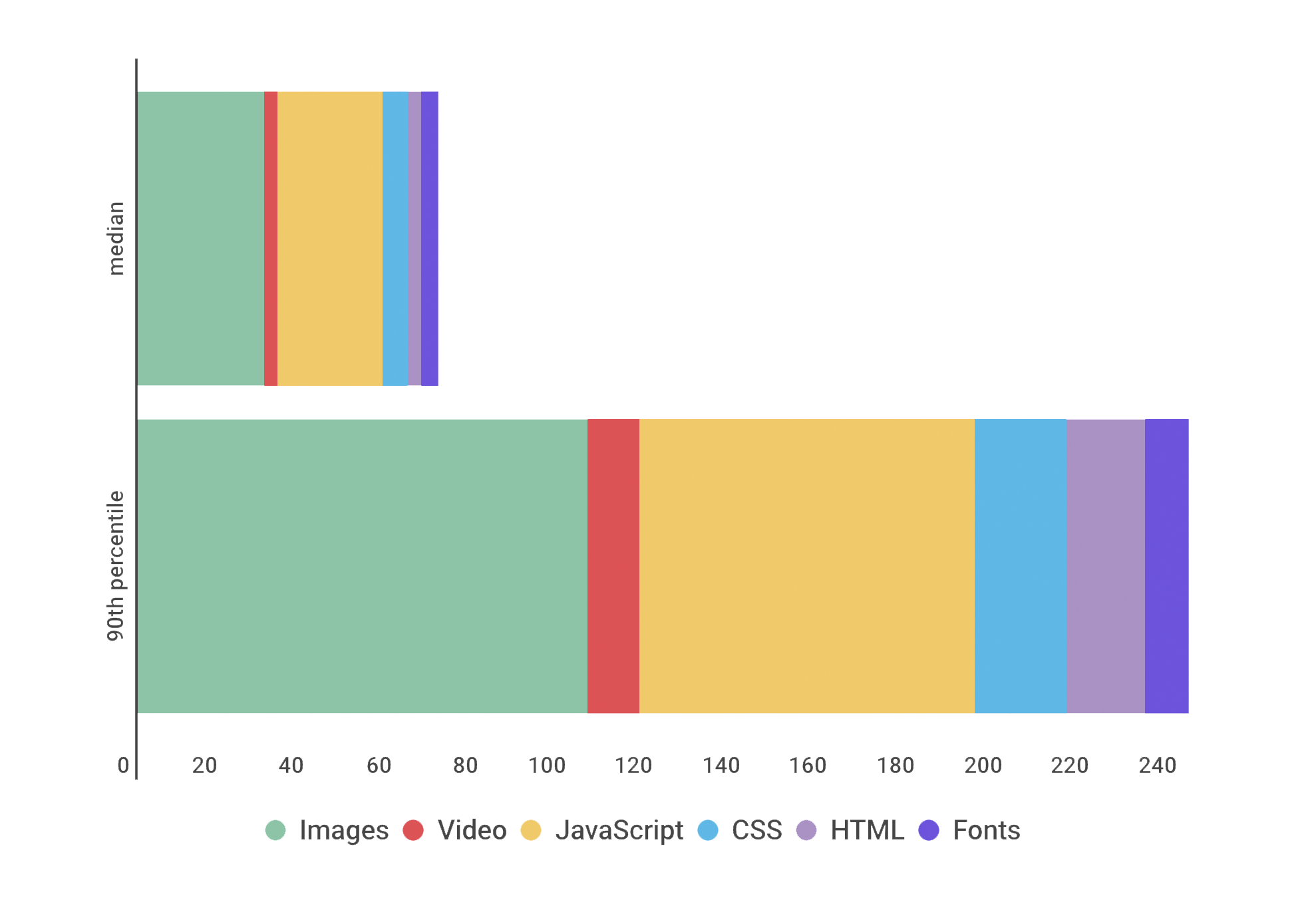

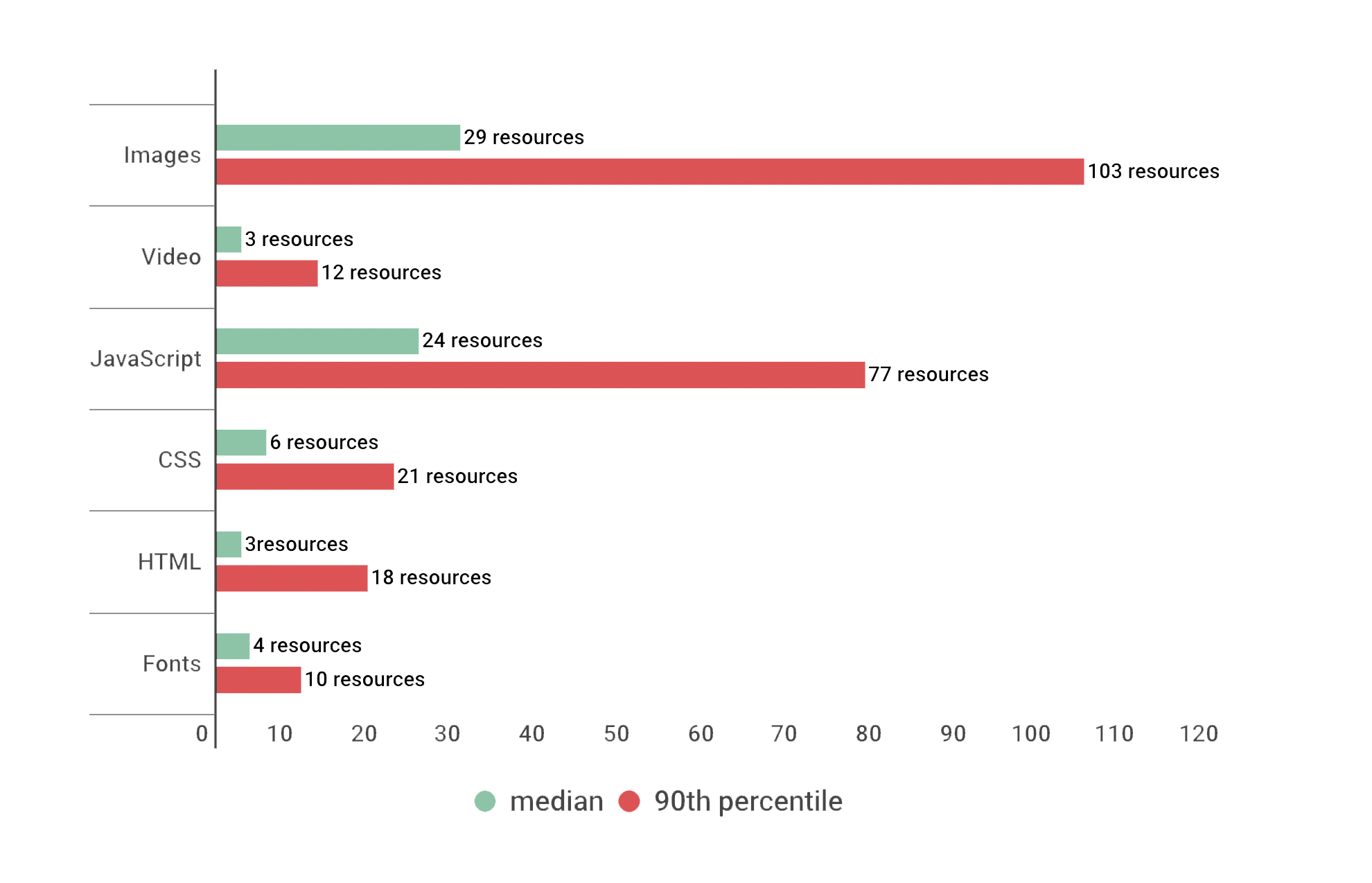

Median vs 90th percentile: Total size and resources

Things get really interesting when we compare numbers at the median versus 90th percentile. As mentioned earlier, this is to try to understand how a "typical" page might perform, as well as pages in the "longtail". Why is it important to understand longtail performance? Think of it this way: if 10% of your visitors are suffering, that's a lot of visitors!
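If you want to run the same median versus 90th percentile comparison on your own data, a nearest-rank percentile is enough to get started. This is a sketch with made-up page weights. The HTTP Archive and monitoring tools may use a different interpolation method, so expect small differences.

```typescript
// Nearest-rank percentile: the smallest value with at least p% of the data at or below it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("percentile() needs at least one value");
  const sorted = values.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1];
}

// Hypothetical page weights in KB for ten page views.
const pageWeightsKB = [900, 1200, 1500, 1800, 2100, 2300, 2600, 3400, 5200, 8800];

const p50 = percentile(pageWeightsKB, 50);
const p90 = percentile(pageWeightsKB, 90);
```

For this sample, `p50` is 2100 and `p90` is 5200, so the longtail page is roughly two and a half times the typical one.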

90p page is almost 4X larger than median page

The 90th percentile page served to desktop is close to 9 MB, making it almost four times larger than the median page.

The 90th percentile page served to mobile is similarly huge, coming in at almost 8 MB.

90p page contains almost 3X the number of requests

The median page served to desktop contained a total of 82 resources (HTML, JS, images, videos, etc.) compared to the 90th percentile with a total of 233 resources. In other words, the 90p page contains almost three times the number of requests.

You can see the breakdown of requests across different resource types:

Not surprisingly, images and JS account for the majority of requests. I was surprised to see how many requests come from CSS and HTML. And I was very surprised to see that the 90p page serves a total of 12 videos!

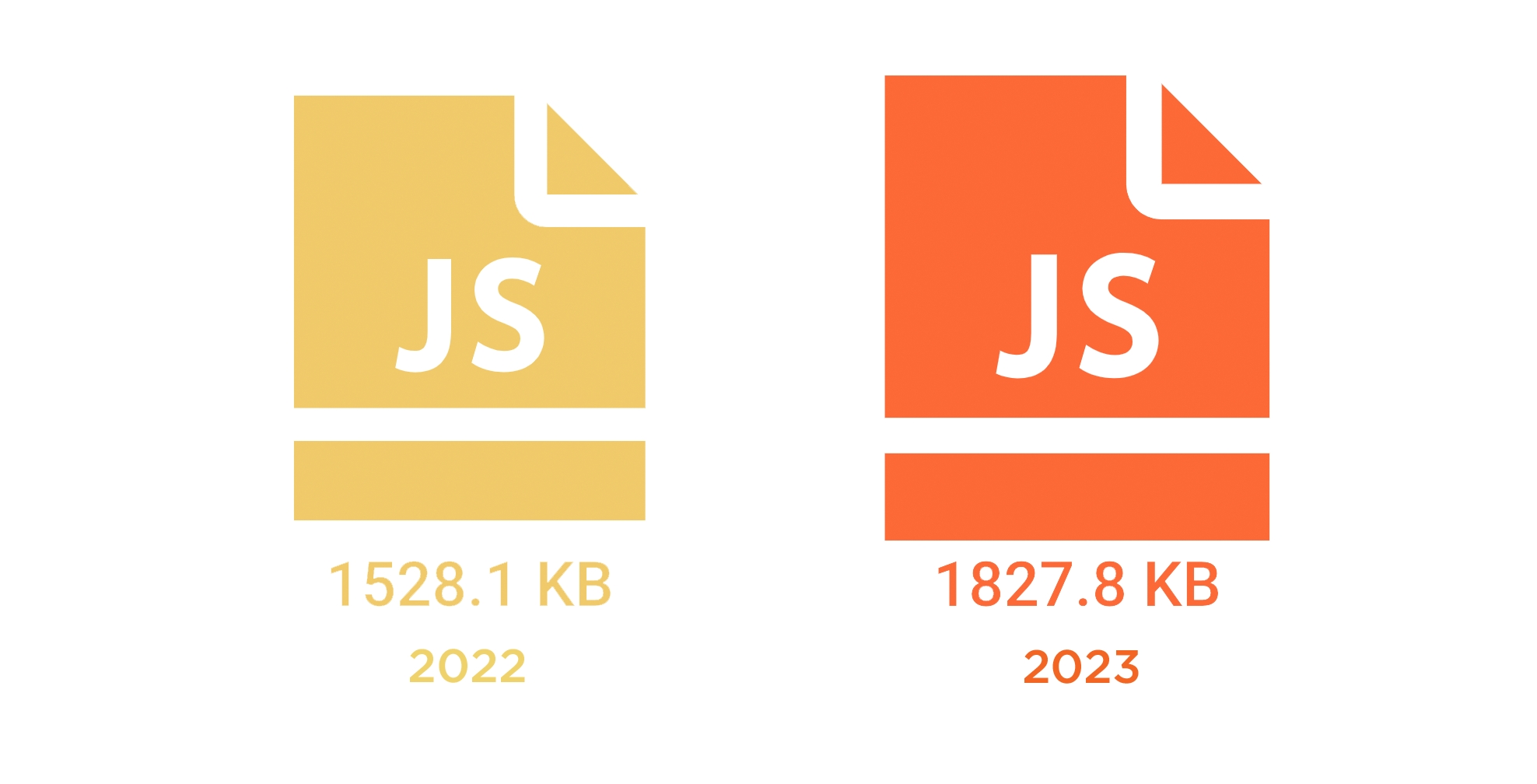

Desktop: 90p JavaScript weight increased by almost 20%

20% growth in a single resource is alarming on its own. Also alarming is the fact that pages with almost 2 MB of JavaScript – across 77 scripts – are being served to users. It bears repeating: excessive JS can hurt all your user-oriented performance metrics. If the scripts are blocking the main thread, then they're affecting how the page looks to your users. And if those scripts are slow to respond to user interaction, then they're also affecting how the page feels to your users.

Mobile: 90p JavaScript weight increased by 17%

As stated above, this growth is concerning, alongside the fact that such a massive amount of CPU-intensive JavaScript is being served to mobile devices. Phone processors have gotten better, but they're still no match for a desktop. Next time you're visiting a site and your phone starts to heat up in your hand, remember this chart.

Desktop: 90p video weight increased by almost 28%

Mobile: 90p video weight increased by 77%

Yes, mobile video weight at the 90th percentile is actually greater than desktop video weight – not completely surprising when you consider that 12 or more video resources are being served to mobile devices.

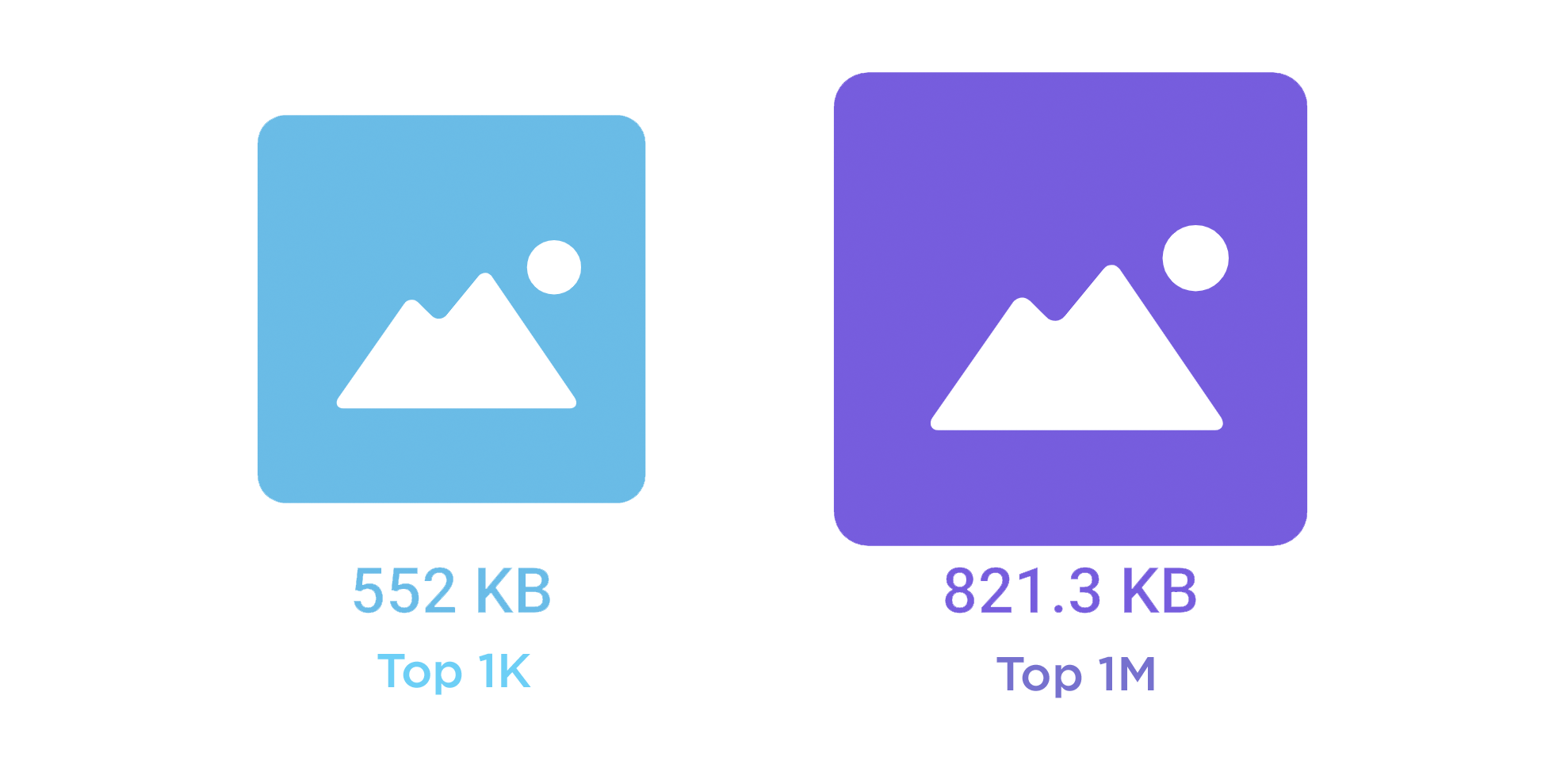

Top 1K vs Top 1M

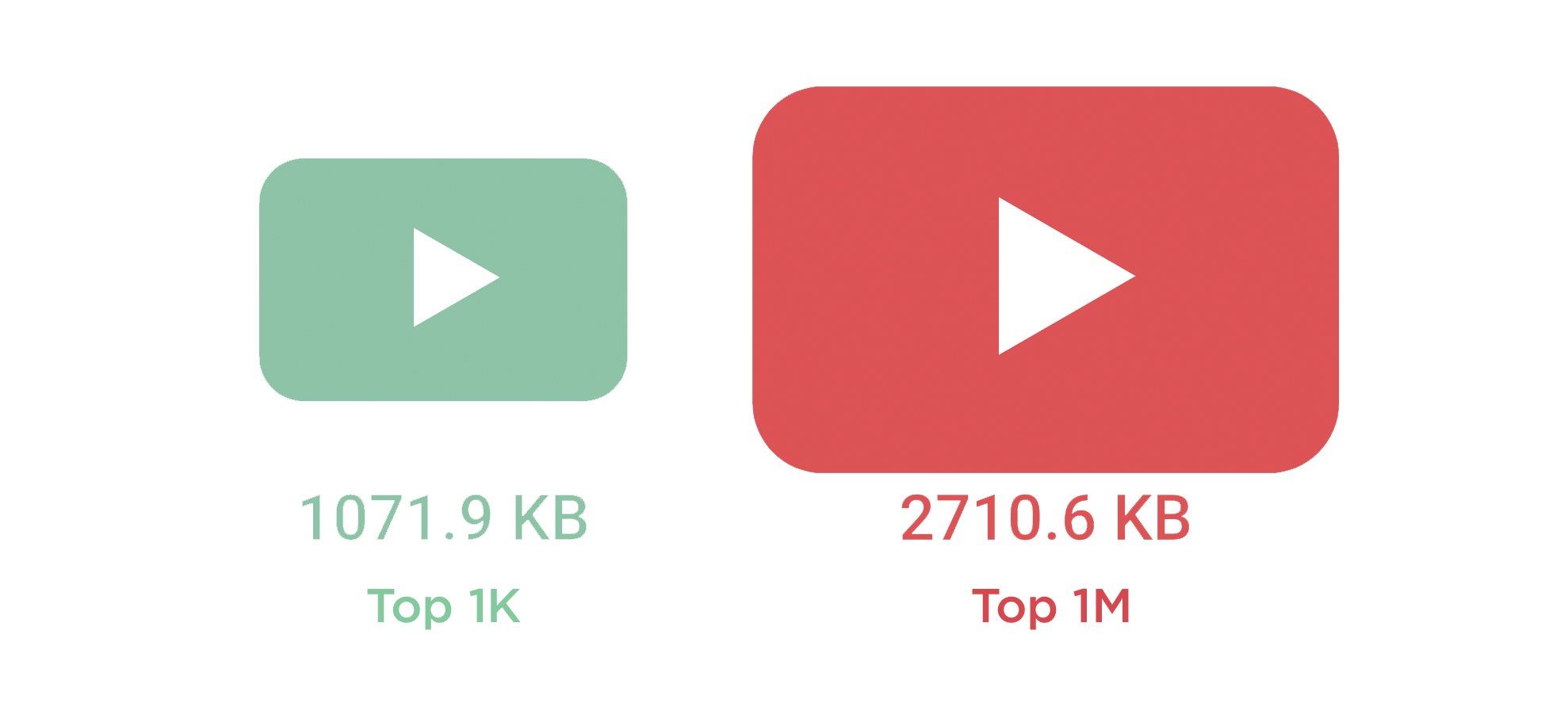

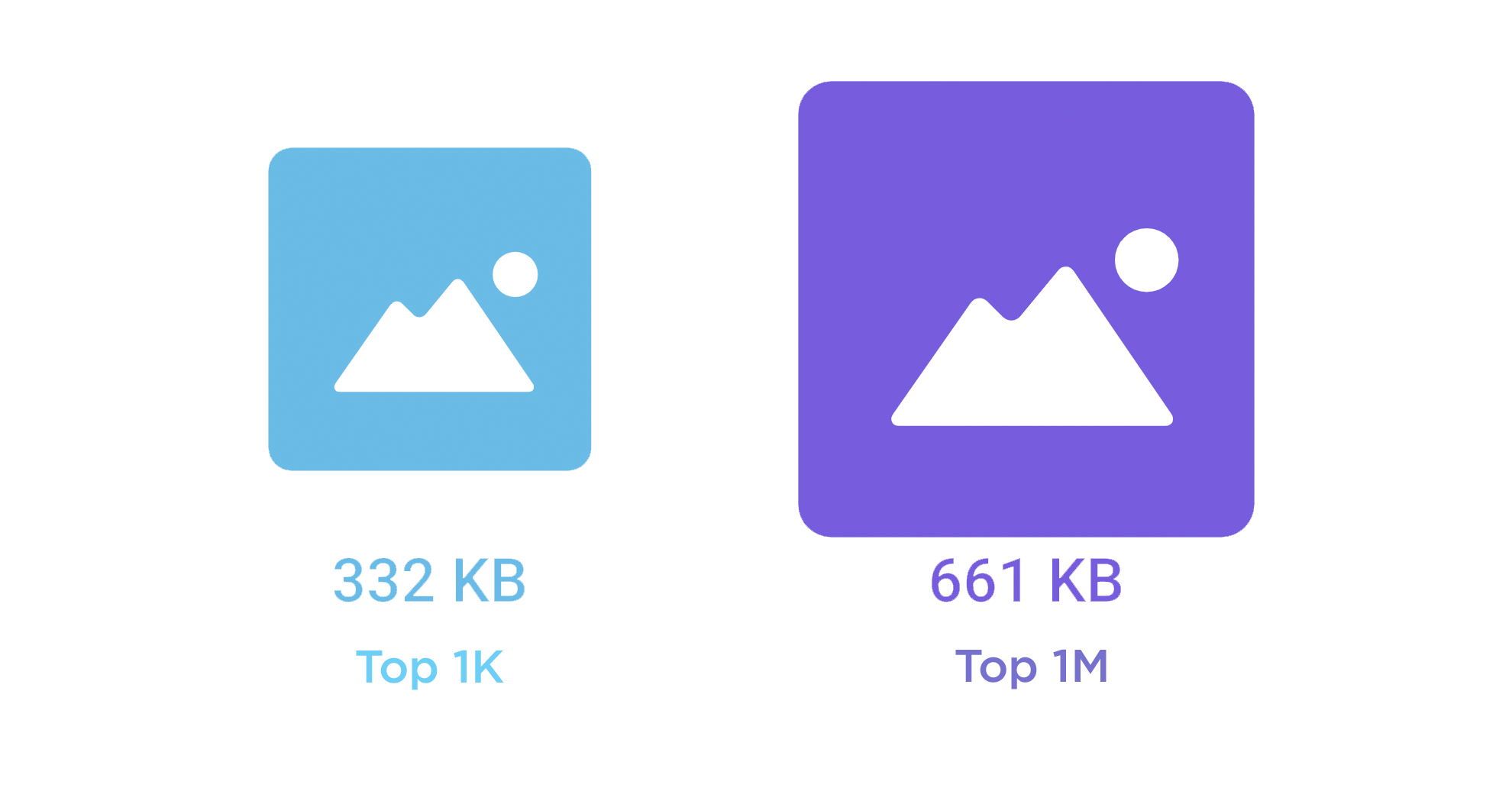

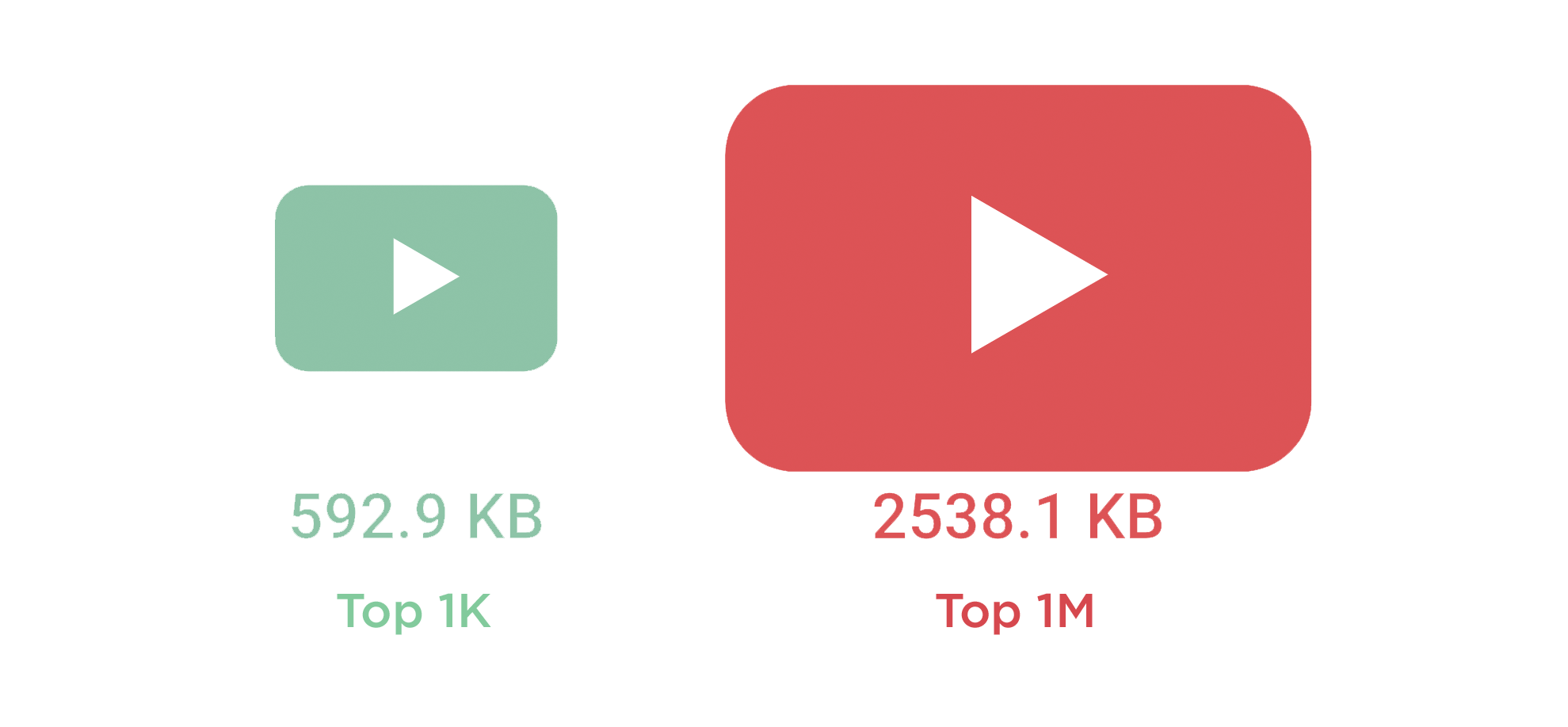

The top thousand pages tend to belong to leading sites. Those businesses perhaps have more awareness of the importance of performance optimization, along with the resources to monitor and tackle performance issues. This might be why the top 1K pages – across desktop and mobile – tend to be smaller and leaner.

(Charts: desktop image weight, desktop video weight, mobile image weight, mobile video weight)

The important takeaway here isn't that if your site isn't in the top 1K you should just assume that poor performance comes with the territory. Instead, this is a great opportunity to audit your key pages and see what you can delete, defer, or optimize, so that you can emulate what bigger players are doing.

Is it possible to have large pages that deliver a good user experience?

Yes. While page size can be a red flag for real performance issues, if you care about user experience, you need to take a closer look at how your pages are built to see if the size and complexity of your pages actually affect how fast your site feels to your users.

It's not enough to look at crude metrics like total requests and size. You need to know:

- How many of your requests are blocking requests?

- If your page contains blocking requests, how many of them occur in the critical rendering path? That is, how many blocking requests are there before key page metrics like Start Render and Largest Contentful Paint?

- How many of your potentially problematic requests come from third parties, and how do you maintain visibility into how they're performing?

- Are the most important images on your page the first images to render? How quickly do they show up?
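The first three questions above can be answered from a list of requests if you know which ones are render-blocking and where they came from. The sketch below assumes you already have that data; the `PageRequest` shape, the milestone parameter, and the host names in the usage note are all hypothetical.

```typescript
// Hypothetical request record; times are milliseconds since navigation start.
interface PageRequest {
  url: string;
  host: string;            // hostname the request went to
  startTime: number;
  renderBlocking: boolean; // true if rendering had to wait on this request
}

interface CriticalPathAudit {
  blockingTotal: number;
  blockingBeforeMilestone: number;
  thirdPartyBlockingUrls: string[];
}

function auditCriticalPath(
  requests: PageRequest[],
  milestoneMs: number,      // e.g. Start Render or Largest Contentful Paint
  firstPartyHosts: string[]
): CriticalPathAudit {
  const blocking = requests.filter((r) => r.renderBlocking);
  const beforeMilestone = blocking.filter((r) => r.startTime < milestoneMs);
  const thirdParty = blocking.filter((r) => firstPartyHosts.indexOf(r.host) === -1);

  return {
    blockingTotal: blocking.length,
    blockingBeforeMilestone: beforeMilestone.length,
    thirdPartyBlockingUrls: thirdParty.map((r) => r.url),
  };
}
```

Calling `auditCriticalPath(requests, 400, ["www.example.com"])` would tell you how many blocking requests started before a 400 ms Start Render, and which of them you don't control.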

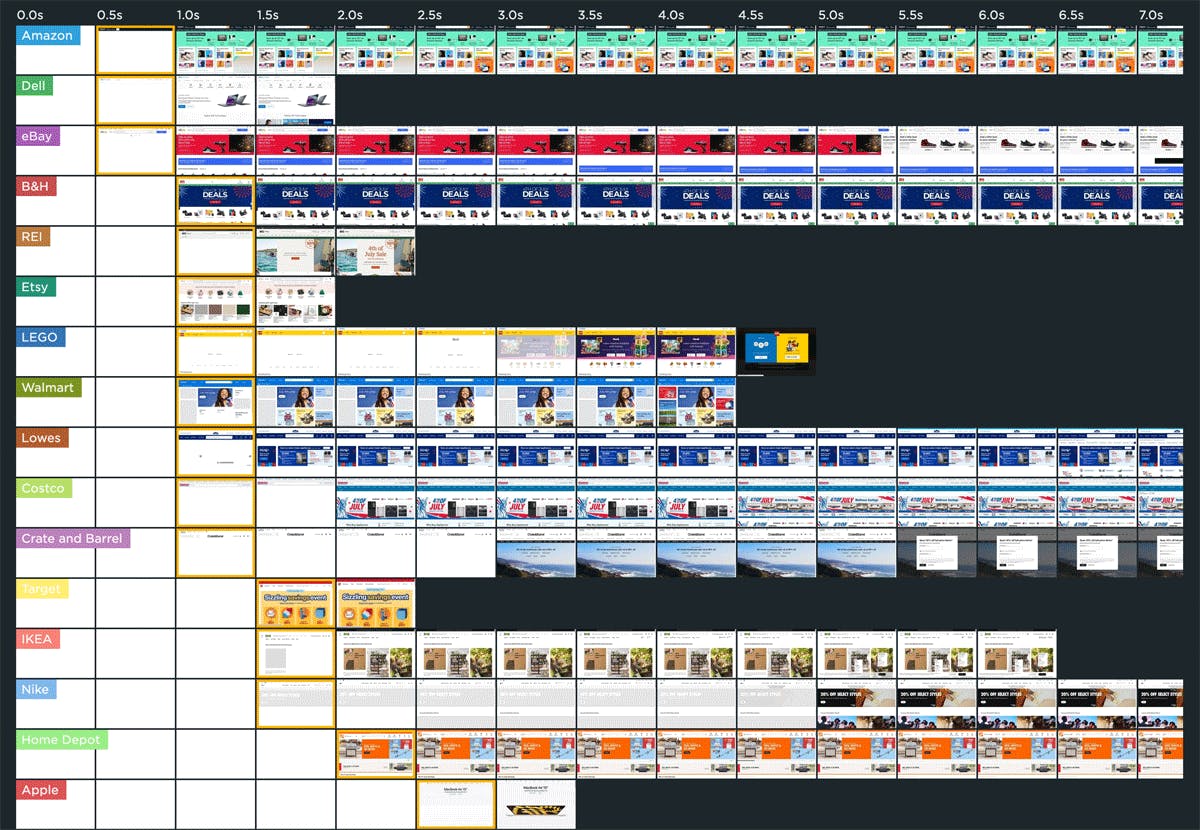

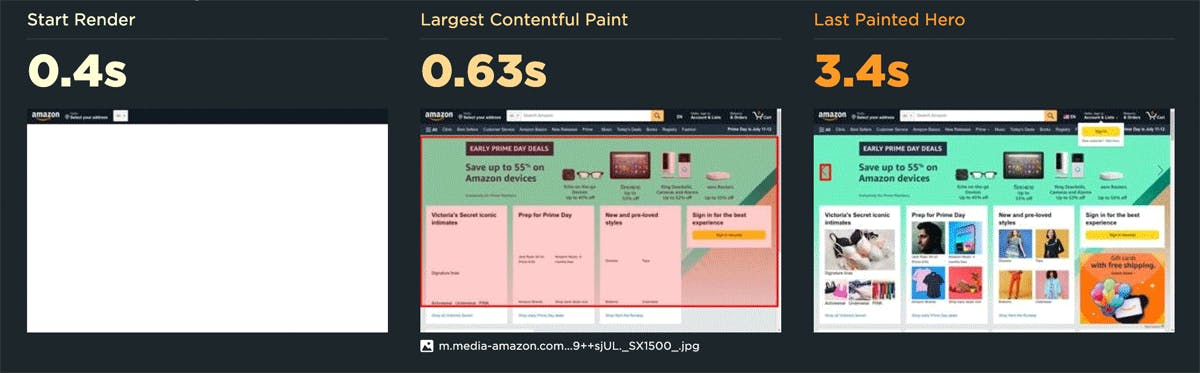

Amazon is a good example of a site that serves large, fast pages, as you can see in this recent look at our Retail Page Speed Benchmarks:

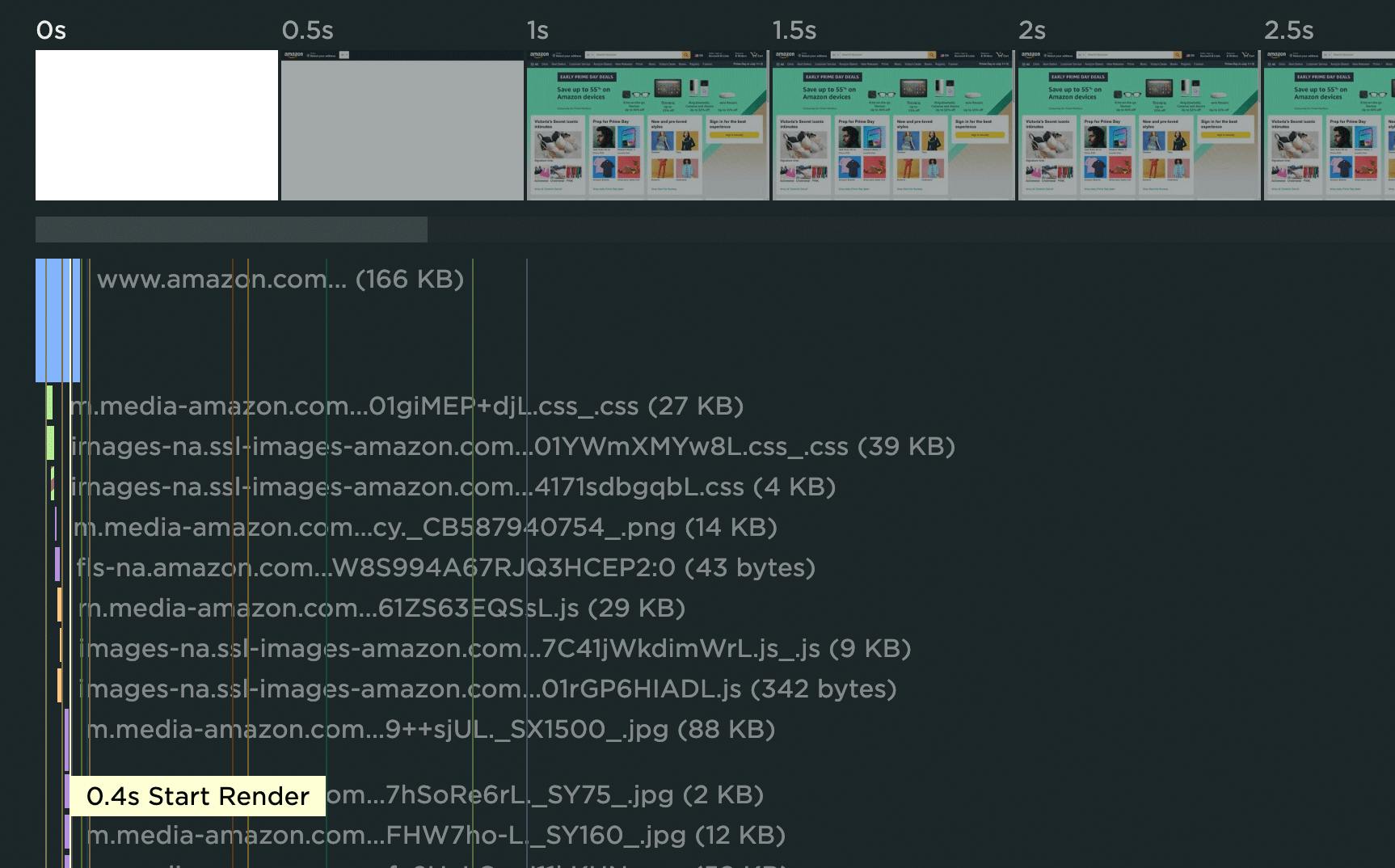

In the waterfall chart for this test run, you can see why the Amazon home page ranks fastest for Start Render. There's only a small number of resources on the critical rendering path, and they're all highly optimized.

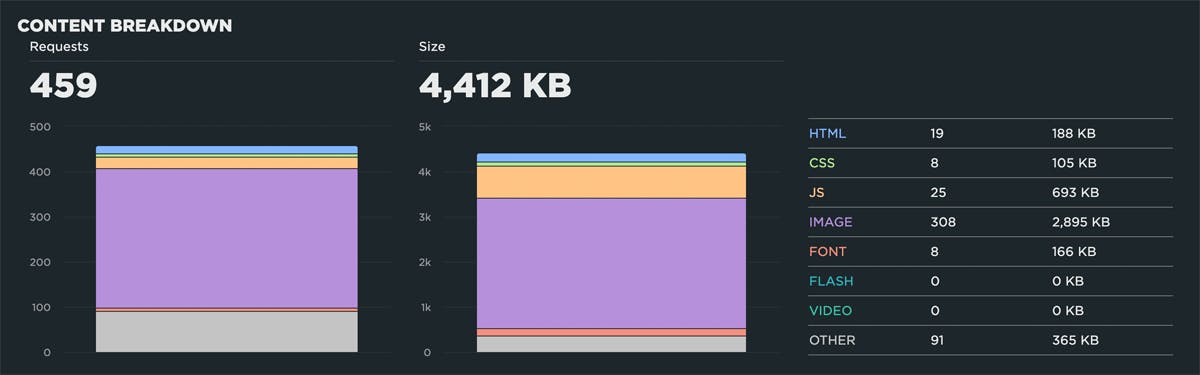

So, despite the fact that the page contains 459 requests and weighs in at 4,412 KB – far beyond the median sizes shared above...

...the page has a Start Render time of 0.4 seconds, a Largest Contentful Paint time of 0.63 seconds, and a Last Painted Hero time of 3.4 seconds.

To recap: yes, you can have pages that are large and fast, but you need to be mindful of the critical rendering path.

How much content should you be serving?

Having said all that, making your pages as small as possible is in the best interest of your users who don't have access to fast networks and devices. Alex Russell suggests these per-page content targets for 2023:

- 150 KB of HTML, CSS, images, and render-blocking font resources

- No more than 300-350 KB of JavaScript

Are these targets aggressive? Yes. Will you be able to meet them? Possibly not. But as the saying goes, don't let perfect be the enemy of good.
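As a rough way to see how far a page is from these targets, you could check its weights against them like this. The sketch uses the upper end of the JavaScript range, and the `PageWeightKB` shape and function name are my own assumptions.

```typescript
// Hypothetical per-page weights in KB.
interface PageWeightKB {
  html: number;
  css: number;
  images: number;
  renderBlockingFonts: number;
  javascript: number;
}

const CONTENT_TARGET_KB = 150; // HTML, CSS, images, and render-blocking fonts combined
const JS_TARGET_KB = 350;      // upper end of the 300-350 KB range

function checkContentTargets(page: PageWeightKB) {
  const contentKB = page.html + page.css + page.images + page.renderBlockingFonts;
  return {
    contentKB,
    contentWithinTarget: contentKB <= CONTENT_TARGET_KB,
    jsWithinTarget: page.javascript <= JS_TARGET_KB,
  };
}
```

A page with 30 KB of HTML, 40 KB of CSS, 60 KB of images, 20 KB of fonts, and 400 KB of JavaScript would just meet the content target while missing the JavaScript one.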

Takeaways

I meet with so many people who build and optimize websites. When we investigate how their pages are built, I routinely witness surprise at spotting things like ghost scripts, huge unoptimized images, and blocking resources they weren't aware of. These are smart people. The problem isn't them – it's the scale of their sites, the speed of their release cycles, and the number of people who touch each page.

We're never going to get our lean, pre-1999, under-1MB web pages back. But we can regain control over the pages we have today.

1. Understand the critical rendering path for each page

Your pages probably have some dead weight on them, and some of that weight is unoptimized. Too much stuff means you can't see the forest for the trees. The key to a good user experience is quickly delivering the most important content first. Here are some great resources for analyzing and optimizing the critical rendering path.

2. Make sure everyone who touches a page understands the performance impact of what they do

All the fancy performance monitoring tools in the world can't help you if you don't have a strong performance culture at your organization. Here are some tips and best practices to help on that journey.

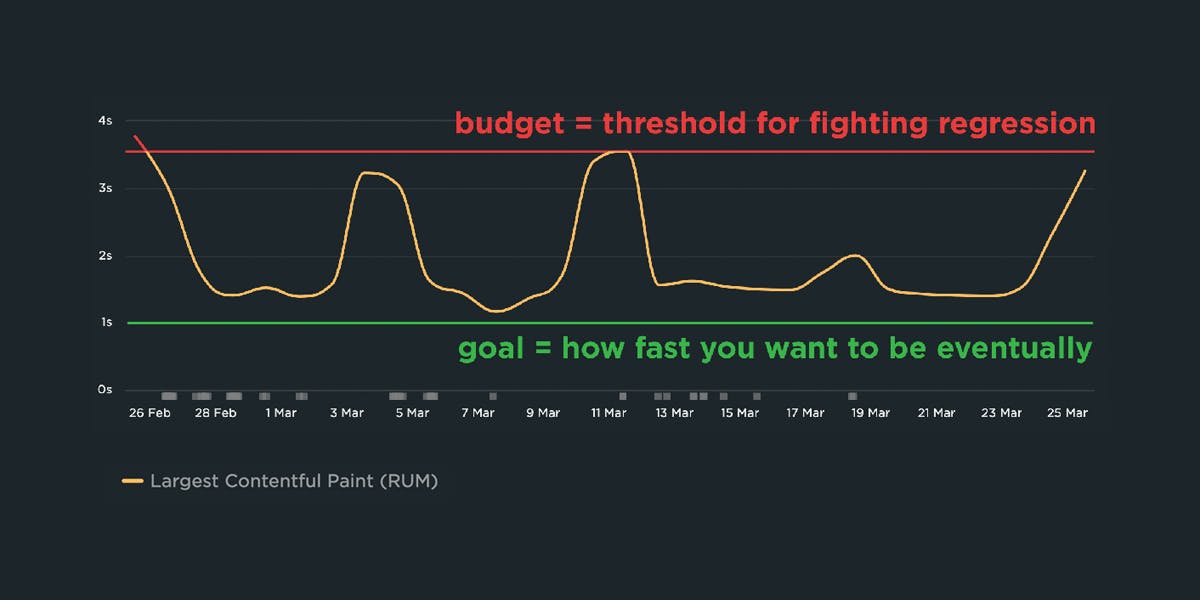

3. Use performance budgets to fight regression

Page bloat happens when people stop paying attention. Pages need to be monitored consistently over time. Integrating performance testing into your CI/CD process is a great way to fight regression, especially if you combine this with creating performance budgets. By creating performance budgets for key metrics – such as Start Render, Largest Contentful Paint, and various page size and weight metrics – you can get alerted when they go out of bounds.
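Whatever tool you use, the core of a budget check is simple: compare each measured metric to its limit and report anything over. Here is a minimal, tool-agnostic sketch. The metric names and limits are hypothetical placeholders, not recommendations, and this is not how any specific product implements budgets.

```typescript
interface Budget {
  metric: string;
  limit: number;
}

interface Violation {
  metric: string;
  limit: number;
  actual: number;
}

function checkBudgets(budgets: Budget[], measured: Record<string, number>): Violation[] {
  const violations: Violation[] = [];
  for (const b of budgets) {
    const actual = measured[b.metric];
    // Metrics with no measurement are skipped here; a stricter check could fail instead.
    if (actual !== undefined && actual > b.limit) {
      violations.push({ metric: b.metric, limit: b.limit, actual });
    }
  }
  return violations;
}

// Hypothetical budgets for one key page.
const exampleBudgets: Budget[] = [
  { metric: "startRenderMs", limit: 1000 },
  { metric: "lcpMs", limit: 2500 },
  { metric: "pageWeightKB", limit: 2000 },
  { metric: "javascriptKB", limit: 350 },
];
```

In a CI/CD pipeline, you would run this against the metrics from each build's test run and fail the build (or send an alert) whenever the returned list is not empty.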

4. Don't assume hardware and networks will mitigate page bloat

Increased page size and complexity are not fully mitigated by faster devices and networks, or by our hard-working browsers. Clearly we need to keep talking about it. We need to understand how ever-growing pages work against us. And we need to have strategies in place to understand and manage our pages.

5. Don't forget to monitor longtail performance

While some of your users may have newer devices and speedy networks, not all are this lucky. If you're using a real user monitoring tool, keep an eye on your performance metrics at the 75th and 95th percentiles so you have an understanding of your site's less-than-optimal performance.

Questions or feedback?

I'd love to hear your thoughts and insights. If you're interested in tracking page size and complexity for your own site, we'd love to have you try SpeedCurve for free!