Lighthouse scores now available in your test results

In the year since Google rolled out Lighthouse, it's safe to say that "Will you be adding Lighthouse scoring?" is one of the most common questions we've fielded here at SpeedCurve HQ. And since Google cranked up the pressure on sites to deliver better mobile performance (or suffer the SEO consequences) earlier this month, we've been getting that question even more often.

We take a rigorous approach to adding new metrics. We think the best solution is always to give you the right data, not just more data. So we're very happy to announce that after much analysis and consideration, we've added Lighthouse scores to SpeedCurve. Here's why – as well as how you can see your scores if you're already a SpeedCurve user.

Why we added Lighthouse to SpeedCurve

Lighthouse is an open-source automated tool for auditing the quality of web pages. You can perform Lighthouse tests on any web page, and get a series of scores for performance, accessibility, SEO, and more.

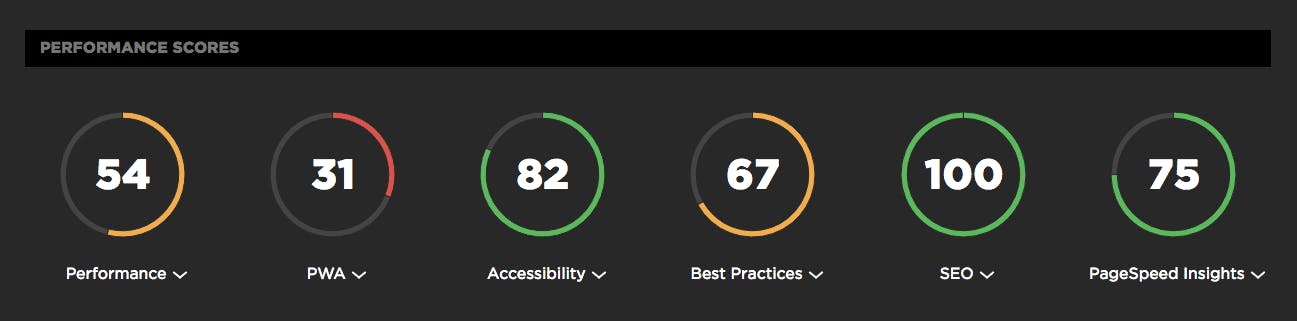

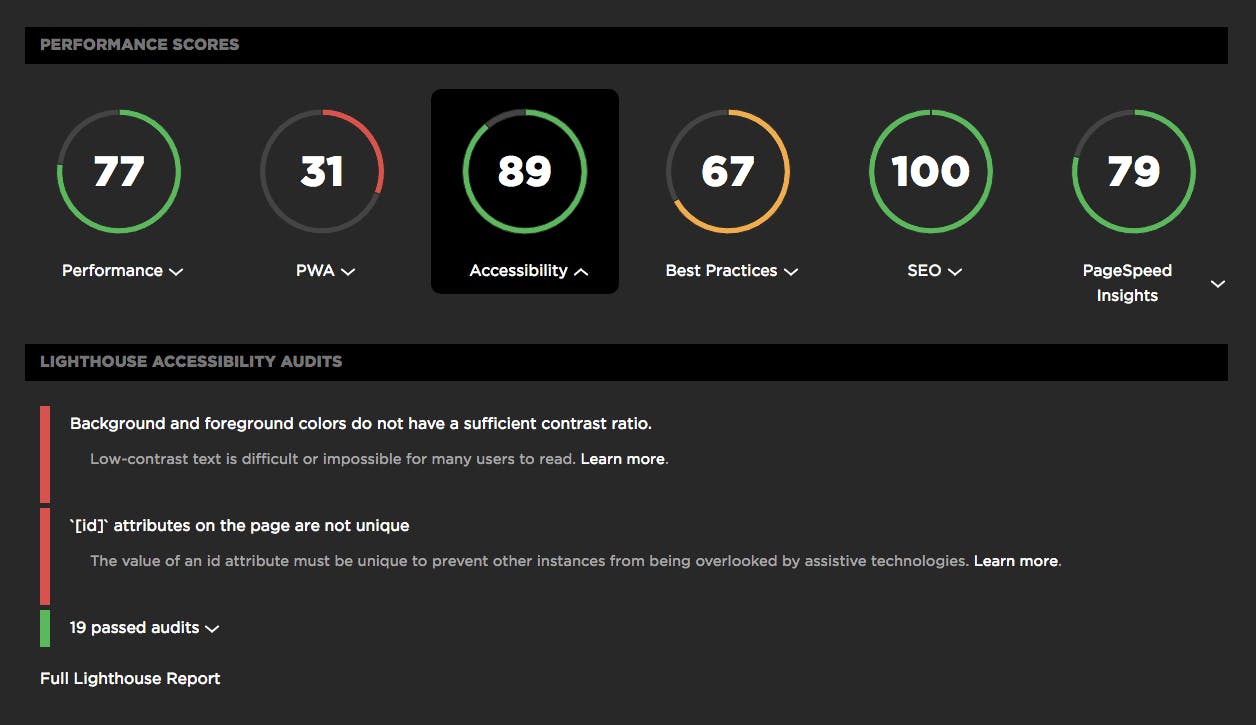

You can run Lighthouse within Chrome DevTools, from the command line, and as a Node module. But there's no need to do any of those if you're already using SpeedCurve Synthetic monitoring. Every time you run a synthetic test, your Lighthouse scores appear at the top of your test results page by default.
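If you do run Lighthouse yourself and save the JSON report (for example with the CLI's `--output=json` option), the category scores can be pulled out with a few lines of code. The sketch below is only an illustration, not part of SpeedCurve: it assumes the report has a top-level `categories` object whose entries carry a `score` between 0 and 1 (the shape used by recent Lighthouse versions), and the function name and sample data are made up for this example.

```python
import json


def category_scores(report_json: str) -> dict:
    """Return {category_id: score out of 100, or None} from a Lighthouse JSON report.

    Assumes each category stores its score as a 0-1 fraction, or null
    when Lighthouse could not compute it.
    """
    report = json.loads(report_json)
    scores = {}
    for category_id, category in report.get("categories", {}).items():
        raw = category.get("score")
        scores[category_id] = None if raw is None else round(raw * 100)
    return scores


# A heavily trimmed, made-up report; a real report contains far more fields.
sample_report = json.dumps({
    "categories": {
        "performance": {"score": 0.42},
        "accessibility": {"score": 0.9},
        "seo": {"score": None},
    }
})

print(category_scores(sample_report))
```

With a real report you would read the file contents and pass them to `category_scores` in place of `sample_report`.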

We had several compelling reasons for adding Lighthouse to SpeedCurve:

Track metrics that correlate to UX

The best performance metrics are those that help you understand how people experience your site. One of the things we like about Lighthouse is that, like SpeedCurve, it tracks user-oriented metrics such as Speed Index, First Meaningful Paint, and Time to Interactive.

See all your improvement recommendations in one place

SpeedCurve already gives you performance recommendations on your test results pages. Now you can get all your recommendations – including those for accessibility and SEO – on the same page. Because we also include your PageSpeed score, SpeedCurve is the only place where you can get your Lighthouse audits and your PageSpeed Insights under one roof.

Improve SEO, especially for mobile

Several of our customers have told us that their SEO teams are very interested in using Lighthouse to help them find their way forward now that Google has announced that page speed is a ranking factor for mobile search.

Monitor a unicorn metric for CEOs and executives

When Google talks, executives listen. Many of our customers have told us that their CEO or other C-level folks don't really care about individual metrics. They want a single aggregated score – a unicorn metric – that's easy to digest and to track over time.

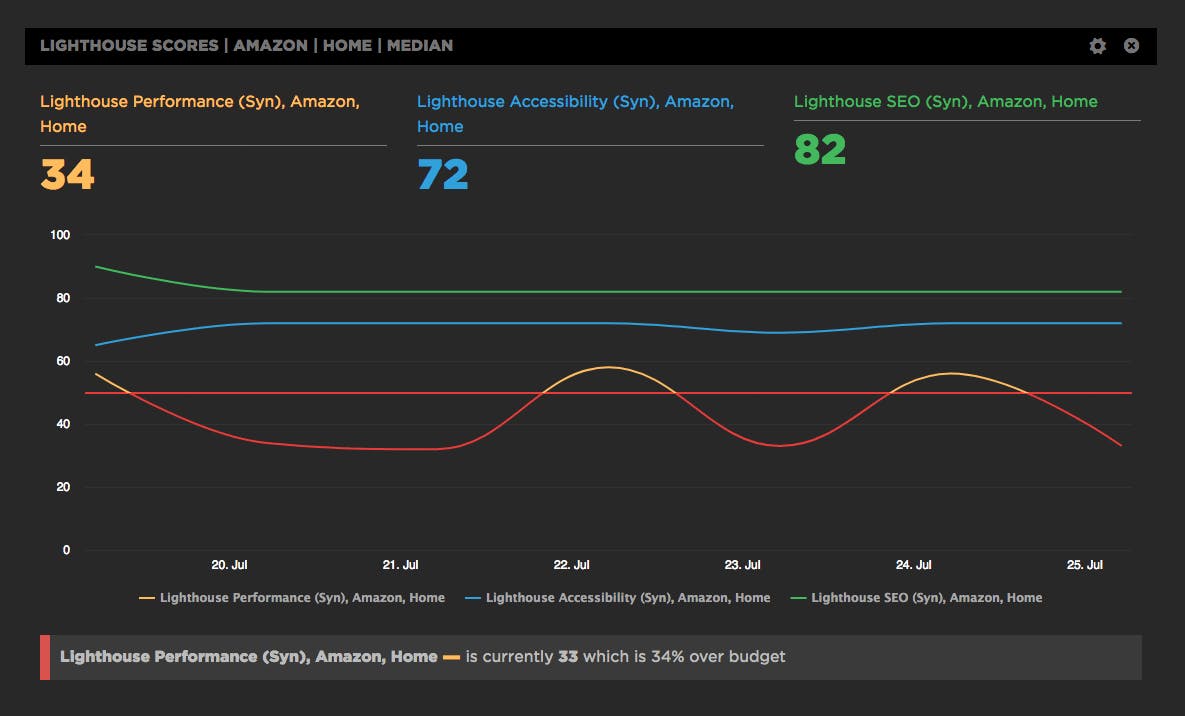

Get alerts when your Lighthouse scores "fail"

One of the great things about running your Lighthouse tests within SpeedCurve is that you can use your Favorites dashboard to create custom charts that let you track each Lighthouse metric. You can also create performance budgets for the metrics you (or your executive team) care about most and get alerts when a budget goes out of bounds.

For example, the custom chart below tracks three Lighthouse scores – performance, accessibility, and SEO – for the Amazon.com home page. I've also created a (very modest) performance budget of 50 out of 100 for the Lighthouse Performance score. That budget is tracked in the same chart, and you can see that the budget has gone out of bounds a couple of times in the week since we activated Lighthouse. You can also see, interestingly, that this score has much more variability than the other scores. This would merit some deeper digging.
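To make the budget idea concrete, here is a minimal sketch of the check behind an "out of bounds" alert: compare each score sample against a minimum and report the ones that fall short. This is not how SpeedCurve implements budgets or alerts; the `Budget` class, the `out_of_bounds` helper, and the week of scores are all hypothetical and exist only to illustrate the logic.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    metric: str   # which score the budget applies to
    minimum: int  # lowest acceptable score, out of 100


def out_of_bounds(history, budget):
    """Return the (label, score) samples that fall below the budget minimum."""
    return [(label, score) for label, score in history if score < budget.minimum]


# The modest budget from the example above: 50 out of 100 for Performance.
performance_budget = Budget(metric="performance", minimum=50)

# Made-up daily scores, not real Amazon.com data.
week = [("Mon", 62), ("Tue", 48), ("Wed", 55), ("Thu", 71), ("Fri", 44)]

alerts = out_of_bounds(week, performance_budget)
for label, score in alerts:
    print(f"{performance_budget.metric} score {score} on {label} "
          f"is below the budget of {performance_budget.minimum}")
```

A score that swings as widely as the one in this sample would trip the budget on some days and not others, which is exactly the kind of variability worth investigating.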

Get started

If you're already a SpeedCurve user, you can drill down into your individual test results and find your Lighthouse scores at the top of the page. We activated Lighthouse about a week ago, so you'll find a week's worth of test data to explore.

If you're not a SpeedCurve user, you can sign up for a free trial and check out your Lighthouse scores and the dozens of other performance and UX metrics we track for you.

As always, we love your feedback!

I'd especially love to hear what your experience has been with Lighthouse. Are you using it to help with something not covered in this post? Have you learned something with Lighthouse that might otherwise have eluded you? Let me know in the comments!