Six web performance resolutions for the new year

For the past two years, the performance.now() conference has been the most valuable performance event I've attended. So valuable, in fact, that I've made some of its talks the cornerstone of this list of performance resolutions for 2020. I'm curious how many (if any) of these are on your list, and as always, I'd love your feedback!

1. Connect the impact of web performance to your business

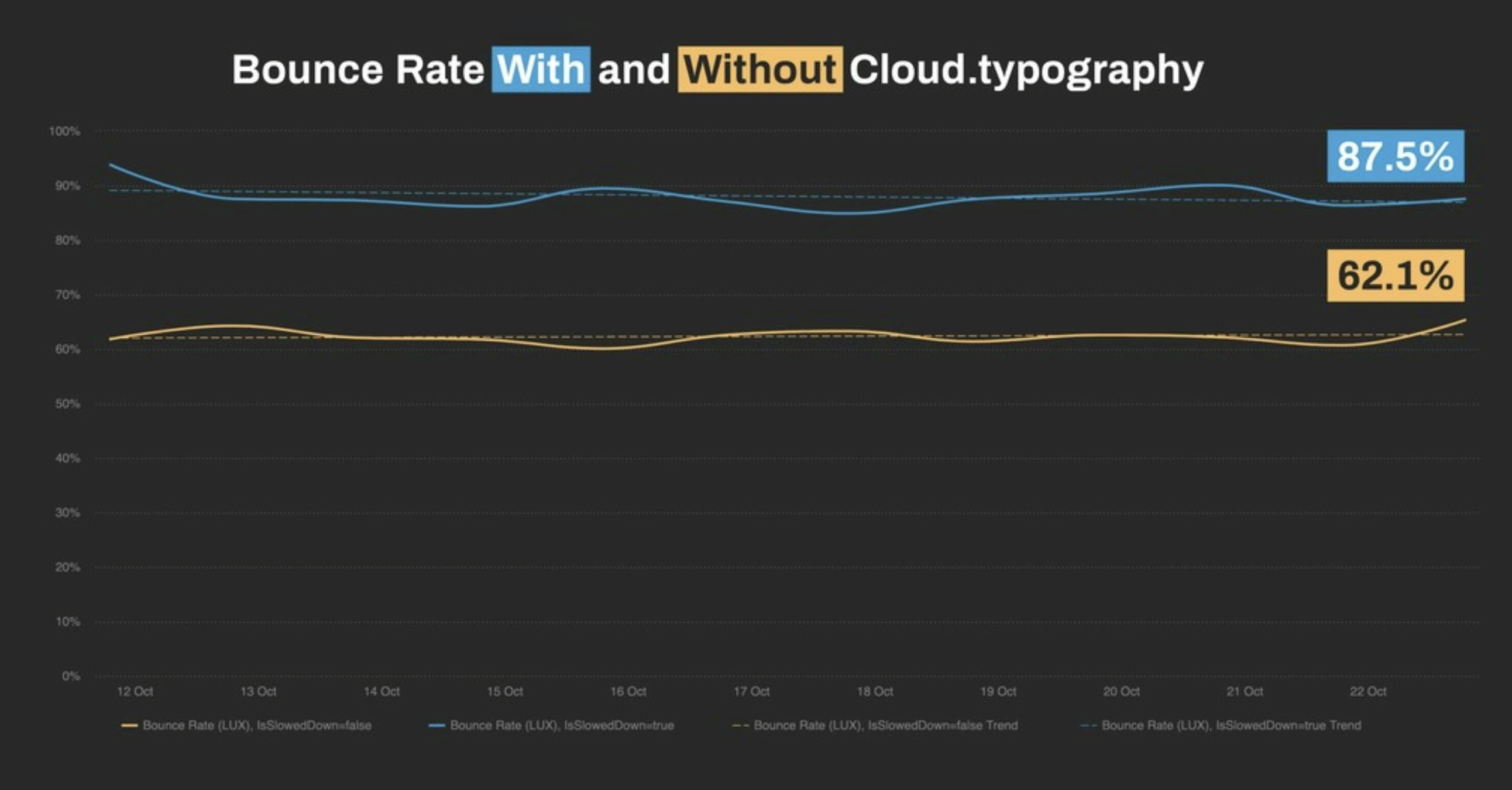

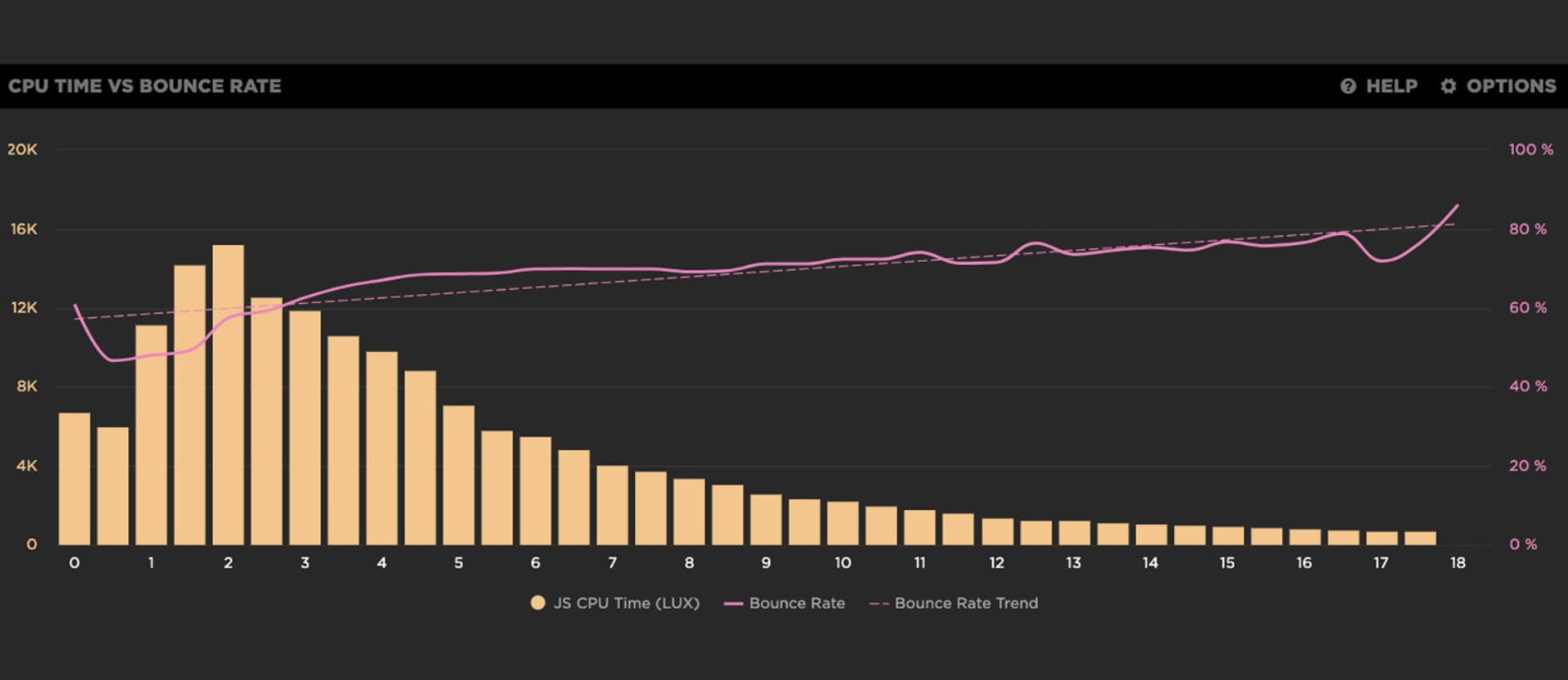

Harry Roberts gave an eye-opening talk called From Milliseconds to Millions, in which he shared practical wisdom gleaned from his experience as a performance consultant for some of the biggest sites in the world. There's a ton of useful material and inspiring case studies in here, including some great examples of using correlation charts to see the relationship between performance and conversions.
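A correlation chart groups sessions by a speed metric and shows the conversion rate for each group. Here is a minimal sketch of the underlying calculation (not how LUX builds its charts), assuming you have per-session records with hypothetical `loadTimeMs` and `converted` fields:

```typescript
// Hypothetical per-session record; the field names are assumptions.
interface RumSession {
  loadTimeMs: number; // any speed metric, e.g. start render or LCP
  converted: boolean; // whether the session converted
}

interface LoadTimeBucket {
  fromMs: number; // inclusive lower bound of the load-time bucket
  sessions: number; // number of sessions in the bucket
  conversionRate: number; // converted sessions / all sessions in the bucket
}

// Group sessions into fixed-width load-time buckets and compute the
// conversion rate for each bucket, sorted from fastest to slowest.
function correlationBuckets(sessions: RumSession[], bucketMs: number): LoadTimeBucket[] {
  const counts = new Map<number, { total: number; converted: number }>();
  for (const s of sessions) {
    const fromMs = Math.floor(s.loadTimeMs / bucketMs) * bucketMs;
    const c = counts.get(fromMs) ?? { total: 0, converted: 0 };
    c.total += 1;
    if (s.converted) c.converted += 1;
    counts.set(fromMs, c);
  }
  return Array.from(counts.entries())
    .sort(([a], [b]) => a - b)
    .map(([fromMs, c]) => ({
      fromMs,
      sessions: c.total,
      conversionRate: c.converted / c.total,
    }));
}
```

Plotting `conversionRate` for each bucket, alongside the number of sessions in it, gives a basic correlation chart. Buckets with only a handful of sessions are noisy, so it's worth filtering or flagging them.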

Harry also showed how to use real user monitoring as part of an A/B test to measure the impact of a single third-party script on bounce rate.
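The general shape of such a test, sketched under our own assumptions rather than Harry's exact setup, is to randomly assign each session to a group, load the third-party script in only one group, record the group alongside your RUM data, and compare bounce rates. The comparison step might look like this, where `variant` and `pageViews` are hypothetical field names:

```typescript
// Hypothetical session record exported from RUM data; field names are assumptions.
interface AbSession {
  variant: "with-script" | "without-script"; // which A/B group the session was in
  pageViews: number; // a session with exactly one page view counts as a bounce
}

// Bounce rate = single-page sessions / all sessions, computed per variant.
function bounceRateByVariant(sessions: AbSession[]): Map<string, number> {
  const totals = new Map<string, { sessions: number; bounces: number }>();
  for (const s of sessions) {
    const t = totals.get(s.variant) ?? { sessions: 0, bounces: 0 };
    t.sessions += 1;
    if (s.pageViews === 1) t.bounces += 1;
    totals.set(s.variant, t);
  }
  const rates = new Map<string, number>();
  totals.forEach((t, variant) => rates.set(variant, t.bounces / t.sessions));
  return rates;
}
```

This only computes raw rates. Before acting on a difference, you'd also want enough sessions in each group for it to be statistically meaningful.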

To run your own A/B tests and create your own correlation charts in LUX, our RUM tool, here are some resources you'll find helpful:

2. Help other people in your organization care about performance

In my role here at SpeedCurve, I've talked with hundreds of our customers about how they approach performance. One thing the most successful teams agree on is that a strong culture of web performance is a huge factor in their success.

In my talk, The 7 Habits of Highly Effective Performance Teams, I shared some tips and best practices that demonstrate how a strong culture of performance can help you:

- Prevent regression

- Reduce gatekeeping

- Increase investment from the business

MORE: Performance culture tips and best practices

3. Find out how happy your users are

How do we know if users are happy? As members of the web performance community, we’ve been thinking about the best ways to answer that question for years. Now the observability community is asking the same questions, but coming at them from the opposite side of the stack.

In her talk Observability is for User Happiness, Emily Nakashima talked about how approaching web performance through the lens of observability has changed the way her team thinks about performance instrumentation and optimization.

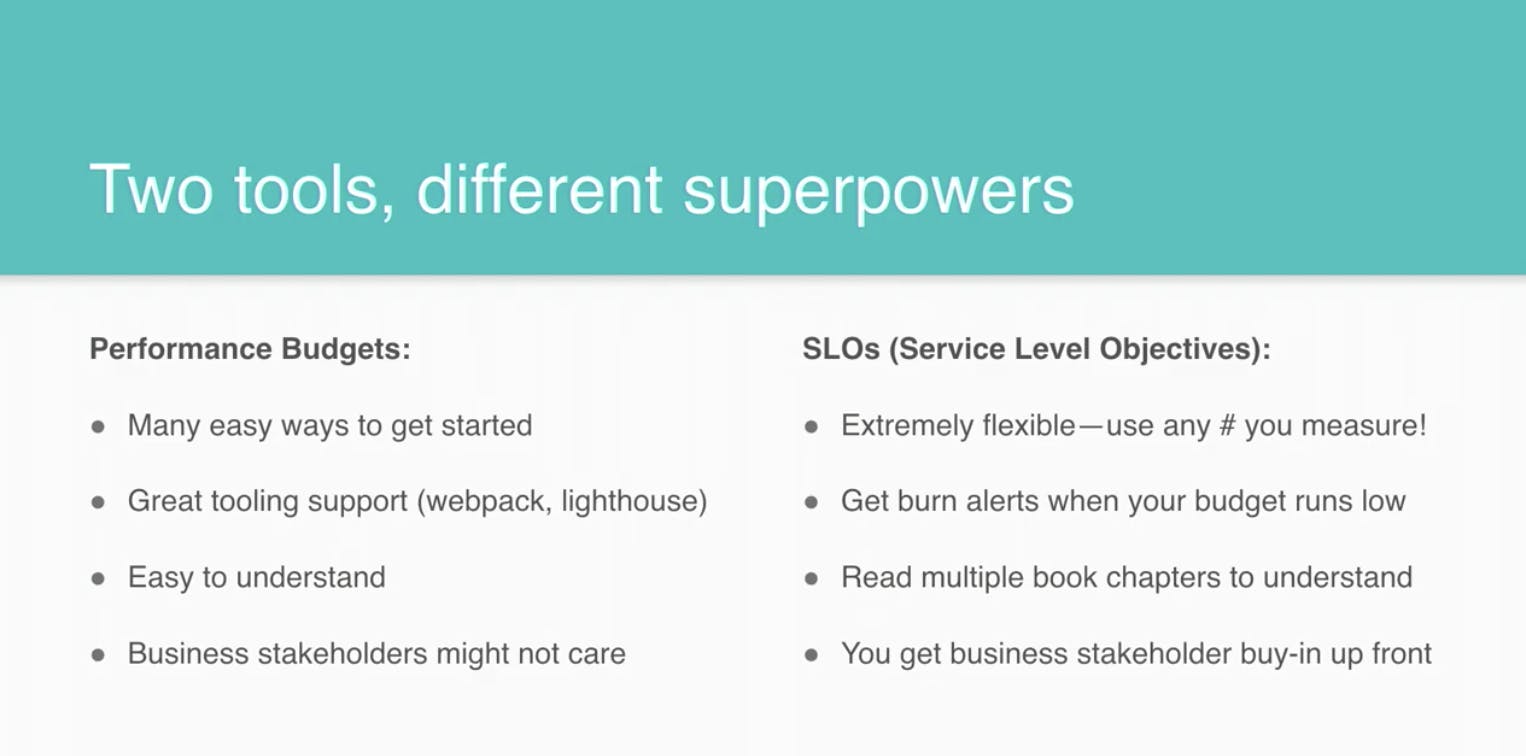

Among other things, Emily talked about how performance budgets and SLOs (service level objectives) can help you stay accountable. Here's how you can use SpeedCurve to set performance budgets and alerts.

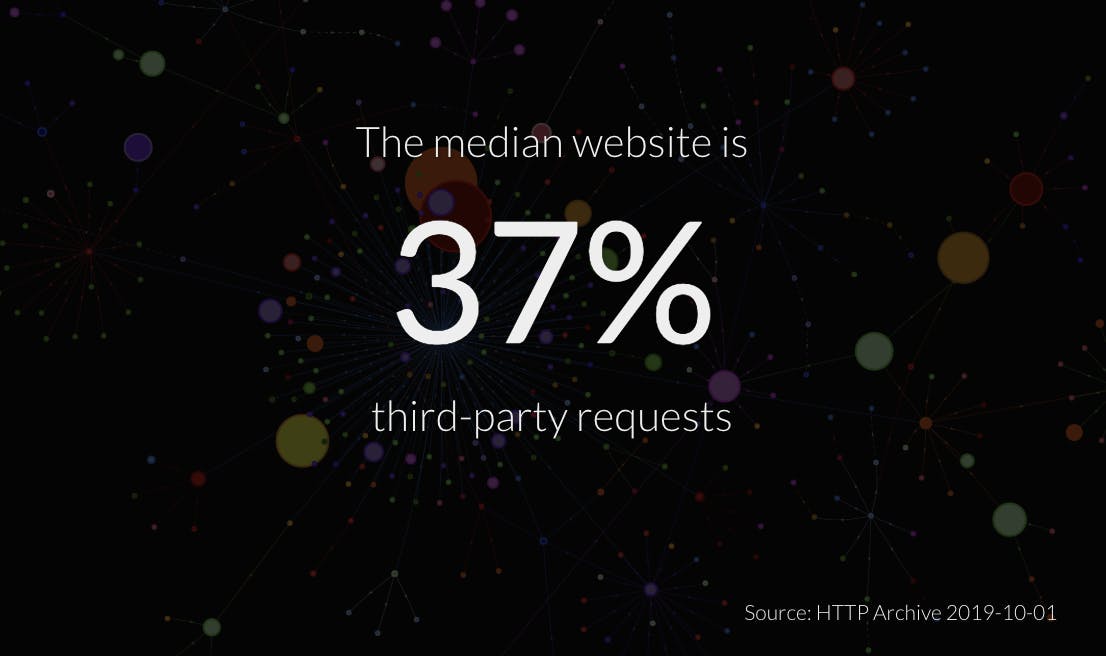
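Budgets and SLOs come at accountability from slightly different angles: a budget typically caps a percentile of a metric, while an SLO sets a target share of page views that must meet a threshold. Here is a minimal sketch of both checks using a nearest-rank percentile. The function names are hypothetical and the thresholds are left to you:

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of
// all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Budget check: is the p-th percentile at or under the budget?
function withinBudget(samples: number[], p: number, budgetMs: number): boolean {
  return percentile(samples, p) <= budgetMs;
}

// SLO check: is the share of samples at or under the threshold at least `target` (0..1)?
function meetsSlo(samples: number[], thresholdMs: number, target: number): boolean {
  if (samples.length === 0) throw new Error("no samples");
  const good = samples.filter((v) => v <= thresholdMs).length;
  return good / samples.length >= target;
}
```

For example, with a hypothetical array of LCP samples, `withinBudget(lcpSamples, 75, 2500)` asks whether the 75th percentile is 2.5 seconds or less, while `meetsSlo(lcpSamples, 2500, 0.9)` asks whether at least 90% of the samples are.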

4. Get to the bottom of third-party performance

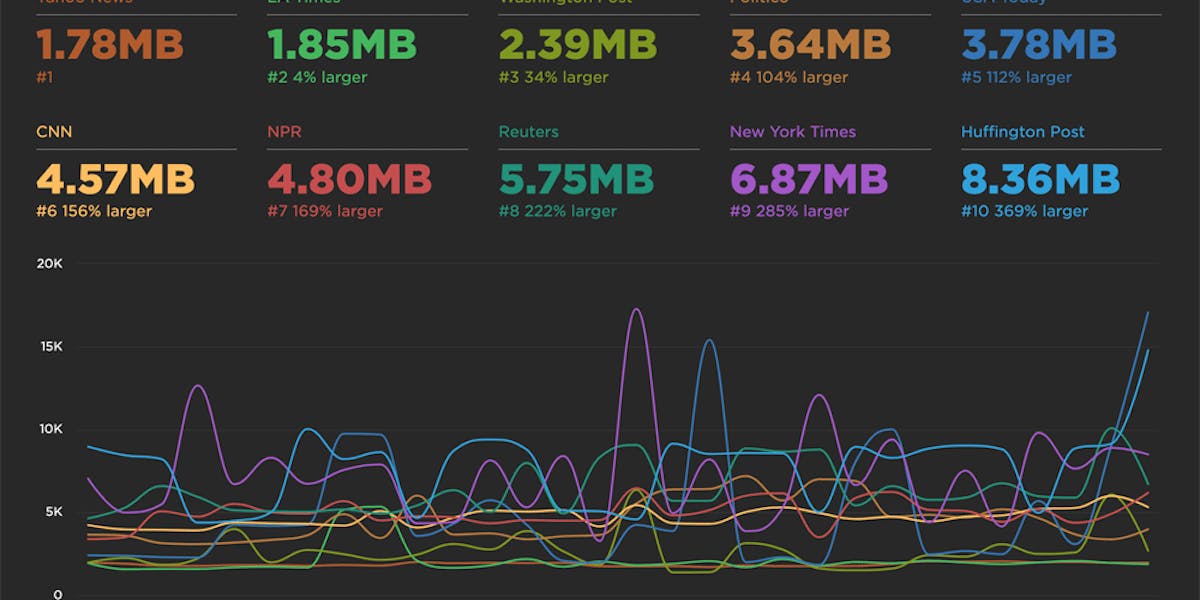

In his talk Deep Dive into Third-Party Performance, Simon Hearne covered which third-party tags have the greatest impact on user experience, how ad blockers affect site speed, and what to look out for when evaluating a new third-party service. He also shared valuable techniques to manage third-party performance and security without creating friction with your marketing and analytics teams.

Your SpeedCurve third-party dashboard lets you monitor individual third parties over time and create performance budgets for them.
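For a rough, do-it-yourself look at third-party weight on a single page, the browser's Resource Timing API exposes each request's URL, duration, and transfer size. The sketch below groups entries by hostname and treats any host not in a list you supply as a third party. That is a simplifying assumption: it would misclassify your own CDN domains, for example. Keep in mind that `transferSize` is 0 for cached responses and for cross-origin resources that don't send a `Timing-Allow-Origin` header.

```typescript
// The subset of PerformanceResourceTiming fields this sketch relies on.
interface ResourceEntry {
  name: string; // the resource URL
  duration: number; // ms from fetch start to response end
  transferSize: number; // bytes; 0 for cached or non-TAO cross-origin resources
}

interface HostSummary {
  host: string;
  requests: number;
  totalDurationMs: number;
  totalBytes: number;
}

// Summarize requests per third-party hostname, largest byte count first.
function thirdPartySummary(entries: ResourceEntry[], firstPartyHosts: string[]): HostSummary[] {
  const byHost = new Map<string, HostSummary>();
  for (const e of entries) {
    const host = new URL(e.name).hostname;
    if (firstPartyHosts.indexOf(host) !== -1) continue; // skip first-party requests
    const s = byHost.get(host) ?? { host, requests: 0, totalDurationMs: 0, totalBytes: 0 };
    s.requests += 1;
    s.totalDurationMs += e.duration;
    s.totalBytes += e.transferSize;
    byHost.set(host, s);
  }
  return Array.from(byHost.values()).sort((a, b) => b.totalBytes - a.totalBytes);
}
```

In a browser, you could pass `performance.getEntriesByType("resource")` (cast to `PerformanceResourceTiming[]`) along with `[location.hostname]`, then log the result with `console.table`.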

5. Reduce the JavaScript on your pages... and keep it off

JavaScript is, byte-for-byte, the most expensive resource on the web, and we’re using more of it than ever in our sites. In his talk When JavaScript Bytes, Tim Kadlec shared practical ways to reduce the amount of JS you're sending your users, as well as tools and approaches to make sure those bytes stay off.

Here's how SpeedCurve can help you manage your JavaScript:

- Monitor JavaScript performance

- Measure jank and UX

- Use the RUM JS dashboard to measure Long Tasks and CPU times

- Track JS errors
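The Long Tasks API reports main-thread tasks that take 50 ms or more, which is where a lot of JavaScript's CPU cost shows up. Here is a minimal sketch that collects them with a `PerformanceObserver` and summarizes them. The summary, including the "blocking" time beyond 50 ms per task (similar in spirit to Total Blocking Time, but without its time window), is a hypothetical illustration and not how SpeedCurve calculates its metrics:

```typescript
interface TaskTiming {
  startTime: number; // ms since navigation start
  duration: number; // ms the task occupied the main thread
}

// Summarize long tasks: count, total time, and the time beyond 50 ms per
// task (the portion during which input handling is blocked).
function summarizeLongTasks(tasks: TaskTiming[]) {
  let totalMs = 0;
  let blockingMs = 0;
  for (const t of tasks) {
    totalMs += t.duration;
    blockingMs += Math.max(0, t.duration - 50);
  }
  return { count: tasks.length, totalMs, blockingMs };
}

// Collect long tasks as they happen, but only where the entry type is supported.
const longTasks: TaskTiming[] = [];
if (
  typeof PerformanceObserver !== "undefined" &&
  (PerformanceObserver.supportedEntryTypes || []).indexOf("longtask") !== -1
) {
  new PerformanceObserver((list) => {
    for (const entry of list.getEntries()) {
      longTasks.push({ startTime: entry.startTime, duration: entry.duration });
    }
    // buffered: true asks for entries recorded before observe() was called.
  }).observe({ type: "longtask", buffered: true });
}
```

Because `longTasks` fills in asynchronously, you'd call `summarizeLongTasks(longTasks)` later, for example when the page is hidden, and send the result wherever you collect RUM data.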

6. Understand the latest performance metrics

We're obsessed with finding metrics that best represent the user experience. Largest Contentful Paint is one of the newest UX-oriented metrics on the landscape, and it looks promising. LCP measures when the largest content element visible in the viewport (such as an image, a video poster image, or a block of text) is rendered.
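In browsers that support it, LCP is exposed to JavaScript through a `PerformanceObserver` with the `largest-contentful-paint` entry type. The browser can emit several entries as progressively larger elements render, and it stops once the user interacts with the page, so the latest entry is the current LCP. Here is a minimal sketch in which logging to the console stands in for sending the value to a RUM endpoint. Real-world code has more edge cases to handle, such as pages loaded in background tabs.

```typescript
// Latest LCP candidate seen so far, in ms since navigation start.
let lcpMs: number | undefined = undefined;

// Only observe where the browser supports the entry type (Chrome only, as of this writing).
if (
  typeof PerformanceObserver !== "undefined" &&
  (PerformanceObserver.supportedEntryTypes || []).indexOf("largest-contentful-paint") !== -1
) {
  new PerformanceObserver((list) => {
    const entries = list.getEntries();
    // Each new entry replaces the previous candidate; for LCP entries,
    // startTime is the render time (or load time if render time is unavailable).
    const latest = entries[entries.length - 1];
    if (latest) lcpMs = latest.startTime;
  }).observe({ type: "largest-contentful-paint", buffered: true });
}

// Report the latest value when the page is hidden (e.g. tab switch or navigation away).
if (typeof document !== "undefined") {
  document.addEventListener("visibilitychange", () => {
    if (document.visibilityState === "hidden" && lcpMs !== undefined) {
      console.log(`LCP: ${Math.round(lcpMs)} ms`);
    }
  });
}
```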

Largest Contentful Paint is currently only available in Chrome, so it was fascinating to learn directly from Annie Sullivan, a member of the Chrome team, how they develop and test new metrics like LCP. Annie's talk, Lessons Learned from Performance Monitoring in Chrome, is a must-watch if you're interested in learning about the life cycle of metrics and the rigour with which new metrics are developed and tested.

Here's how you can track Largest Contentful Paint using SpeedCurve:

- Create performance budgets for LCP in both RUM and Synthetic

- SpeedCurve's User Happiness metric (available in RUM) is an aggregate metric that includes Largest Contentful Paint

And here's our glossary of commonly used performance metrics.

What are your performance resolutions?

Do you have questions about anything I've mentioned here? Are you focusing on anything I haven't talked about? Let me know in the comments, or reach me on Twitter!